What’s in a camera? A lens, a shutter, a light-sensitive surface and, increasingly, a set of highly sophisticated algorithms. While the physical components are still improving bit by bit, Google, Samsung and Apple are increasingly investing in (and showcasing) improvements wrought entirely from code. Computational photography is the only real battleground now.

The reason for this shift is pretty simple: Cameras can’t get too much better than they are right now, or at least not without some rather extreme shifts in how they work. Here’s how smartphone makers hit the wall on photography, and how they were forced to jump over it.

Not enough buckets

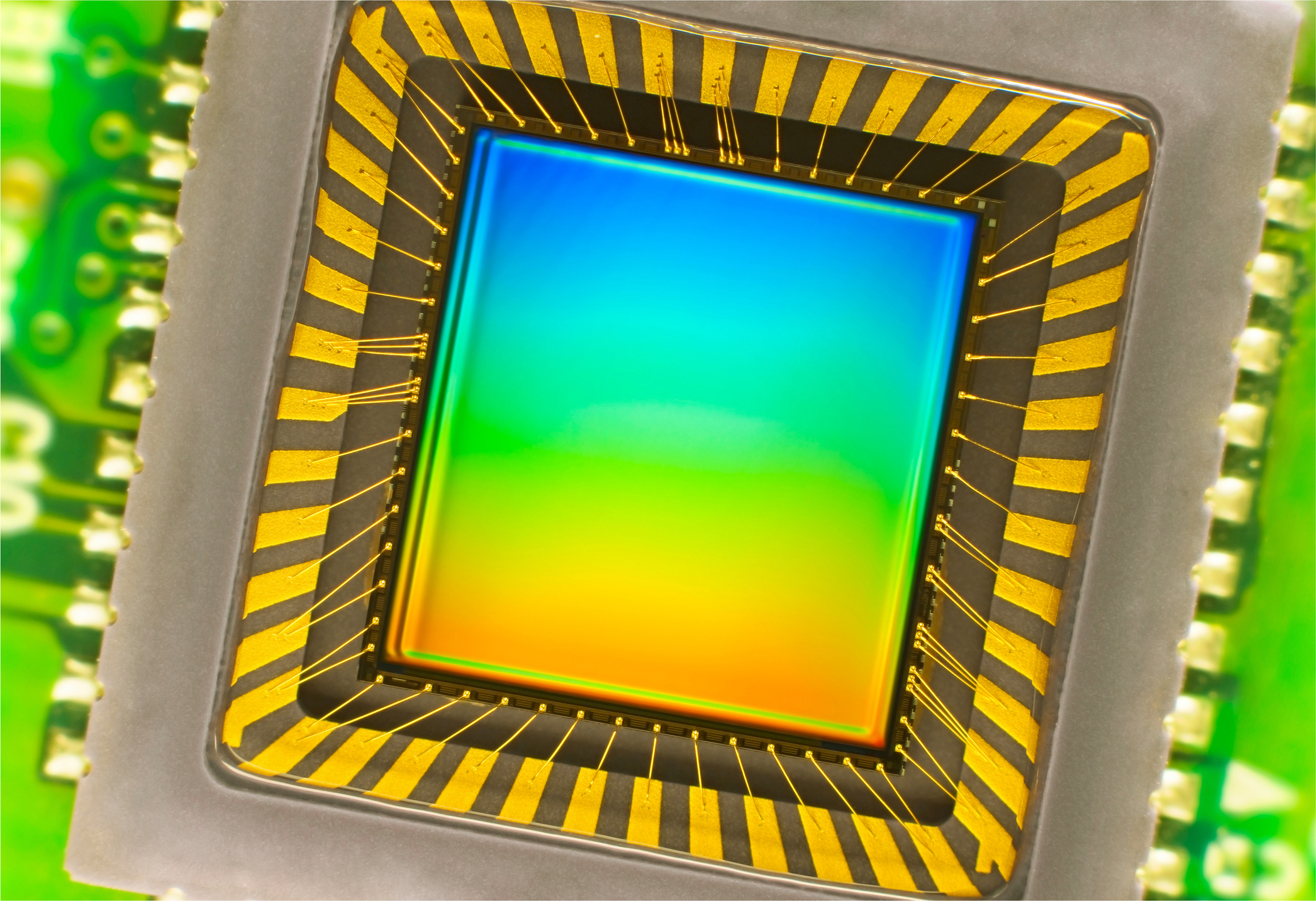

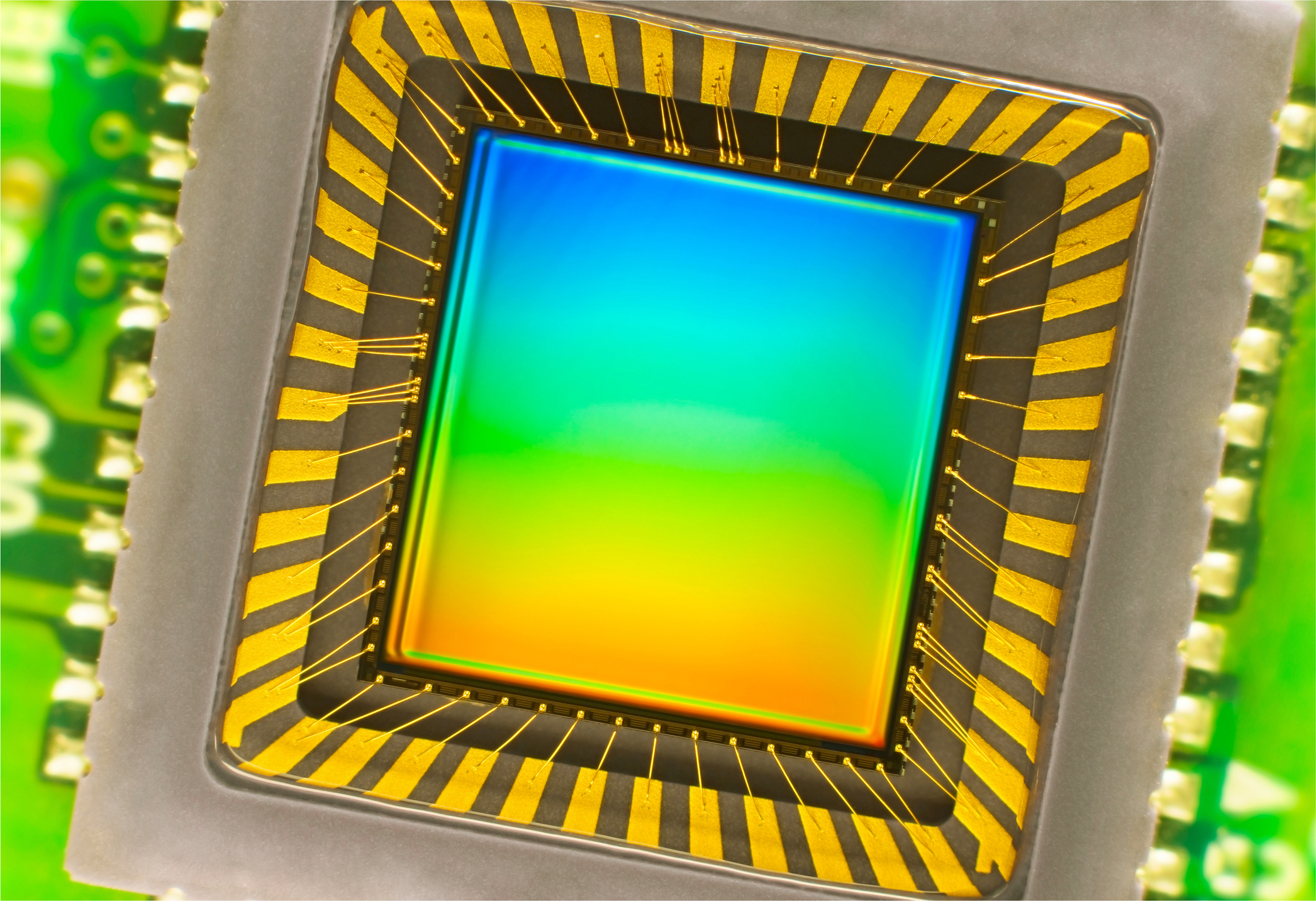

An image sensor one might find in a digital camera

The sensors in our smartphone cameras are truly amazing things. The work that’s been done by the likes of Sony, OmniVision, Samsung and others to design and fabricate tiny yet sensitive and versatile chips is really pretty mind-blowing. For a photographer who’s watched the evolution of digital photography from the early days, the level of quality these microscopic sensors deliver is nothing short of astonishing.

But there’s no Moore’s Law for those sensors. Or rather, just as Moore’s Law is now running into quantum limits at sub-10-nanometer levels, camera sensors hit physical limits much earlier. Think about light hitting the sensor as rain falling on a bunch of buckets; you can place bigger buckets, but there are fewer of them; you can put smaller ones, but they can’t catch as much each; you can make them square or stagger them or do all kinds of other tricks, but ultimately there are only so many raindrops and no amount of bucket-rearranging can change that.

Sensors are getting better, yes, but not only is this pace too slow to keep consumers buying new phones year after year (imagine trying to sell a camera that’s 3 percent better), but phone manufacturers often use the same or similar camera stacks, so the improvements (like the recent switch to backside illumination) are shared amongst them. So no one is getting ahead on sensors alone.

Perhaps they could improve the lens? Not really. Lenses have arrived at a level of sophistication and perfection that is hard to improve on, especially at small scale. To say space is limited inside a smartphone’s camera stack is a major understatement — there’s hardly a square micron to spare. You might be able to improve them slightly as far as how much light passes through and how little distortion there is, but these are old problems that have been mostly optimized.

The only way to gather more light would be to increase the size of the lens, either by having it A: project outwards from the body; B: displace critical components within the body; or C: increase the thickness of the phone. Which of those options does Apple seem likely to find acceptable?

In retrospect it was inevitable that Apple (and Samsung, and Huawei, and others) would have to choose D: none of the above. If you can’t get more light, you just have to do more with the light you’ve got.

Isn’t all photography computational?

The broadest definition of computational photography includes just about any digital imaging at all. Unlike film, even the most basic digital camera requires computation to turn the light hitting the sensor into a usable image. And camera makers differ widely in the way they do this, producing different JPEG processing methods, RAW formats and color science.

For a long time there wasn’t much of interest on top of this basic layer, partly from a lack of processing power. Sure, there have been filters, and quick in-camera tweaks to improve contrast and color. But ultimately these just amount to automated dial-twiddling.

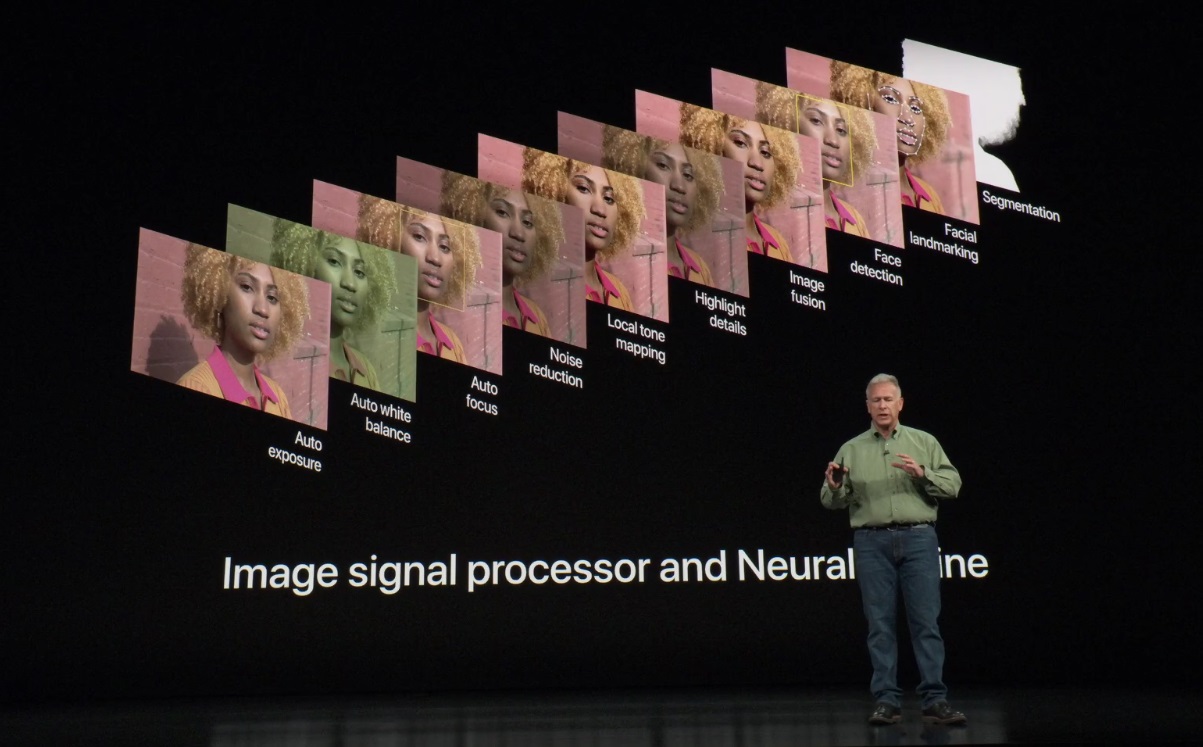

The first real computational photography features were arguably object identification and tracking for the purposes of autofocus. Face and eye tracking made it easier to capture people in complex lighting or poses, and object tracking made sports and action photography easier as the system adjusted its AF point to a target moving across the frame.

These were early examples of deriving metadata from the image and using it proactively, either to improve that image or to feed forward into the next.

In DSLRs, autofocus accuracy and flexibility are marquee features, so this early use case made sense; but outside a few gimmicks, these “serious” cameras generally deployed computation in a fairly vanilla way. Faster image sensors meant faster sensor offloading and burst speeds, some extra cycles dedicated to color and detail preservation and so on. DSLRs weren’t being used for live video or augmented reality. And until fairly recently, the same was true of smartphone cameras, which were more like point and shoots than the all-purpose media tools we know them as today.

The limits of traditional imaging

Despite experimentation here and there and the occasional outlier, smartphone cameras are pretty much the same. They have to fit within a few millimeters of depth, which limits their optics to a few configurations. The size of the sensor is likewise limited: a DSLR might use an APS-C sensor 23 by 15 millimeters across, making an area of 345 mm²; the sensor in the iPhone XS, probably the largest and most advanced on the market right now, is 7 by 5.8 mm or so, for a total of 40.6 mm².

Roughly speaking, it’s collecting an order of magnitude less light than a “normal” camera, but is expected to reconstruct a scene with roughly the same fidelity, colors and such — around the same number of megapixels, too. On its face this is sort of an impossible problem.
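To put a number on that "order of magnitude," here is a quick back-of-the-envelope calculation using the sensor dimensions quoted above. It assumes that light gathered scales with sensor area at the same exposure and f-number, which is a simplification; the names are purely illustrative.

```python
# Sensor dimensions in millimeters, as quoted in the text above.
APS_C_MM = (23.0, 15.0)
IPHONE_XS_MM = (7.0, 5.8)  # approximate ("or so" in the text)


def area_mm2(dims):
    """Area of a rectangular sensor given (width, height) in mm."""
    width, height = dims
    return width * height


def light_ratio(big, small):
    """Ratio of light-collecting area (assumes equal exposure and f-number)."""
    return area_mm2(big) / area_mm2(small)


ratio = light_ratio(APS_C_MM, IPHONE_XS_MM)
print(f"APS-C: {area_mm2(APS_C_MM):.1f} mm^2")
print(f"Phone: {area_mm2(IPHONE_XS_MM):.1f} mm^2")
print(f"Area ratio: {ratio:.1f}x")
```

The ratio comes out at about 8.5, so "an order of magnitude" is a fair rounding rather than an exaggeration.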

Improvements in the traditional sense help out — optical and electronic stabilization, for instance, make it possible to expose for longer without blurring, collecting more light. But these devices are still being asked to spin straw into gold.

Luckily, as I mentioned, everyone is pretty much in the same boat. Because of the fundamental limitations in play, there’s no way Apple or Samsung can reinvent the camera or come up with some crazy lens structure that puts them leagues ahead of the competition. They’ve all been given the same basic foundation.

All competition therefore comprises what these companies build on top of that foundation.

Image as stream

The key insight in computational photography is that an image coming from a digital camera’s sensor isn’t a snapshot, the way it is generally thought of. In traditional cameras the shutter opens and closes, exposing the light-sensitive medium for a fraction of a second. That’s not what digital cameras do, or at least not what they can do.

A camera’s sensor is constantly bombarded with light; rain is constantly falling on the field of buckets, to return to our metaphor, but when you’re not taking a picture, these buckets are bottomless and no one is checking their contents. But the rain is falling nevertheless.

To capture an image the camera system picks a point at which to start counting the raindrops, measuring the light that hits the sensor. Then it picks a point to stop. For the purposes of traditional photography, this enables nearly arbitrarily short shutter speeds, which isn’t much use to tiny sensors.

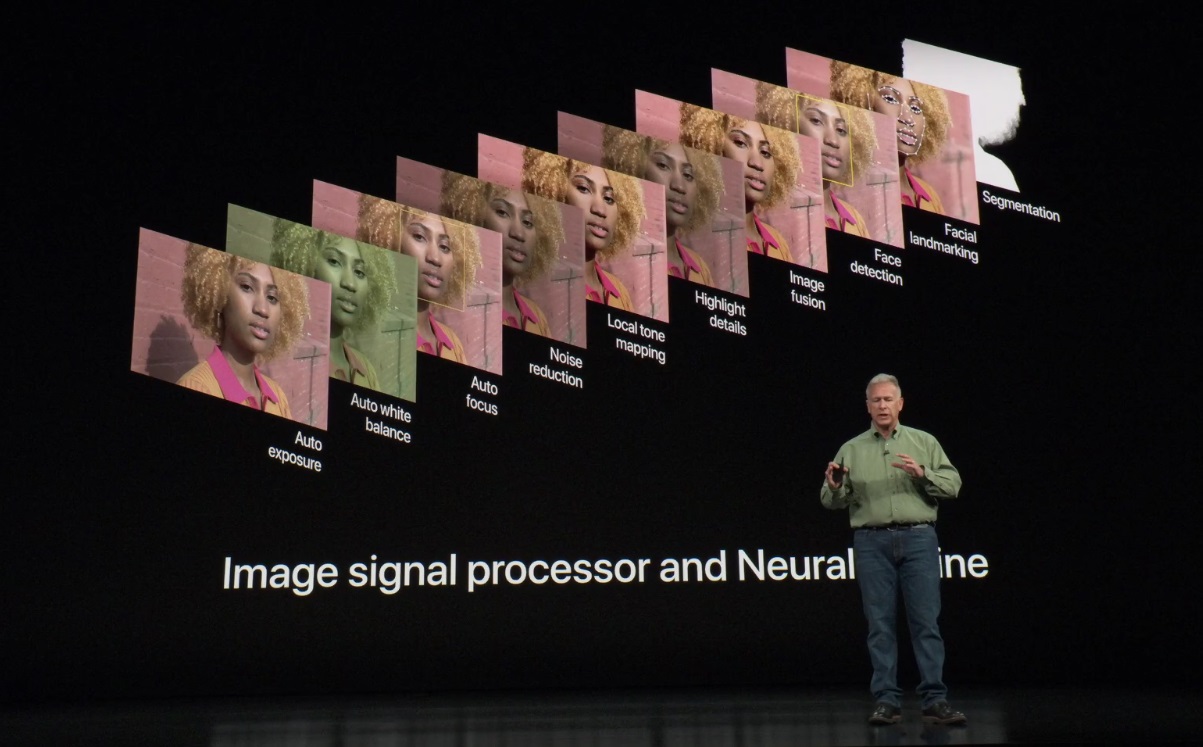

Why not just always be recording? Theoretically you could, but it would drain the battery and produce a lot of heat. Fortunately, in the last few years image processing chips have gotten efficient enough that they can, when the camera app is open, keep a certain duration of that stream — limited resolution captures of the last 60 frames, for instance. Sure, it costs a little battery, but it’s worth it.
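The "last 60 frames" idea is essentially a ring buffer. The sketch below is one minimal way to picture it, not a description of how any phone's image pipeline is actually built; `FrameBuffer` and its methods are hypothetical names, and frames are stand-in integers rather than real image data.

```python
from collections import deque


class FrameBuffer:
    """Keeps only the most recent `capacity` frames from a continuous stream."""

    def __init__(self, capacity=60):
        self._frames = deque(maxlen=capacity)

    def push(self, frame):
        # Once the buffer is full, the deque drops the oldest frame automatically.
        self._frames.append(frame)

    def latest(self, n):
        """Return up to the n most recent frames, oldest first."""
        if n <= 0:
            return []
        return list(self._frames)[-n:]

    def __len__(self):
        return len(self._frames)


# Simulate 100 frames arriving while the camera app is open.
buffer = FrameBuffer(capacity=60)
for frame_number in range(100):
    buffer.push(frame_number)

print(len(buffer))        # number of frames currently retained
print(buffer.latest(3))   # the three most recent frames
```

Memory use stays fixed no matter how long the camera app is open, which is what makes always-on capture affordable.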

Access to the stream allows the camera to do all kinds of things. It adds context.

Context can mean a lot of things. It can be photographic elements like the lighting and distance to subject. But it can also be motion, objects, intention.

A simple example of context is what is commonly referred to as HDR, or high dynamic range imagery. This technique uses multiple images taken in a row with different exposures to more accurately capture areas of the image that might have been underexposed or overexposed in a single exposure. The context in this case is understanding which areas those are and how to intelligently combine the images together.

This can be accomplished with exposure bracketing, a very old photographic technique, but it can be accomplished instantly and without warning if the image stream is being manipulated to produce multiple exposure ranges all the time. That’s exactly what Google and Apple now do.
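For the curious, here is a toy version of merging bracketed exposures. It is a simplified, textbook-style weighted merge and not what Google or Apple actually ship; it assumes a perfectly linear sensor, already-aligned frames and pixel values scaled to the range 0 to 1. All function names are made up for illustration.

```python
def simulate_capture(radiance, exposure_time):
    """Hypothetical linear sensor: value grows with exposure and clips at 1.0."""
    return [min(1.0, r * exposure_time) for r in radiance]


def hat_weight(value):
    """Trust mid-tones most; near-black and clipped pixels get almost no weight."""
    return max(1e-4, 1.0 - abs(2.0 * value - 1.0))


def merge_exposures(frames, exposure_times):
    """Weighted per-pixel estimate of scene radiance from bracketed frames."""
    merged = []
    for i in range(len(frames[0])):
        weighted_sum = 0.0
        weight_total = 0.0
        for frame, t in zip(frames, exposure_times):
            w = hat_weight(frame[i])
            weighted_sum += w * (frame[i] / t)  # radiance estimate from this frame
            weight_total += w
        merged.append(weighted_sum / weight_total)
    return merged


# A three-pixel "scene" with a deep shadow, a mid-tone and a bright highlight.
scene = [0.5, 20.0, 400.0]
times = [1 / 1000, 1 / 100, 1 / 10]
frames = [simulate_capture(scene, t) for t in times]
merged = merge_exposures(frames, times)
print(merged)
```

No single frame here captures all three pixels well: the longest exposure clips the highlight and the shortest buries the shadow. The merged estimate recovers each one by leaning on whichever frame exposed that pixel best.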

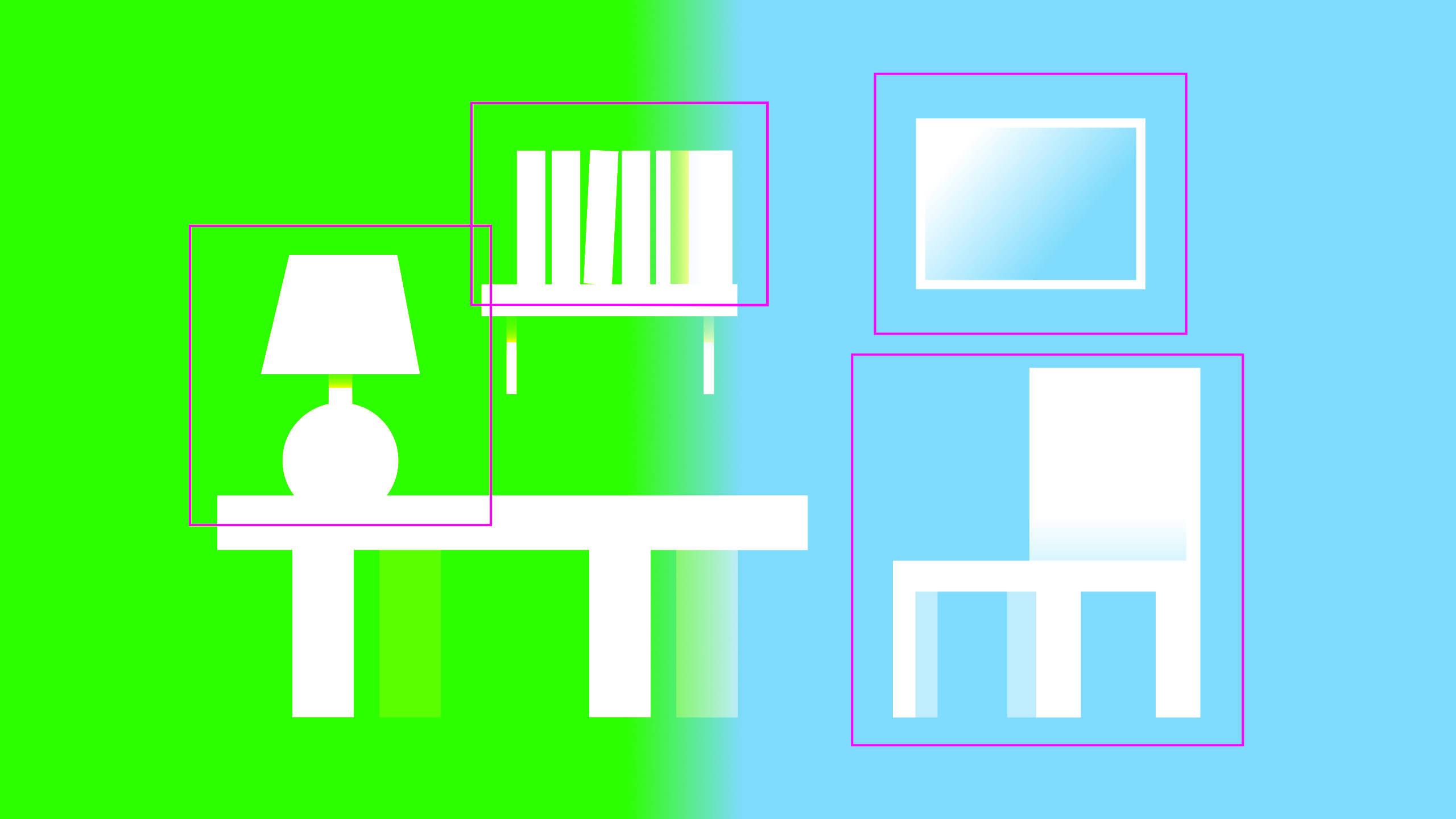

Something more complex is of course the “portrait mode” and artificial background blur or bokeh that is becoming more and more common. Context here is not simply the distance of a face, but an understanding of what parts of the image constitute a particular physical object, and the exact contours of that object. This can be derived from motion in the stream, from stereo separation in multiple cameras, and from machine learning models that have been trained to identify and delineate human shapes.
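In its crudest form, the effect boils down to "blur everything that isn't the subject." The sketch below shows that idea on a single row of pixels, assuming the subject mask has already been worked out by one of the methods just described. It is deliberately naive (a uniform box blur, no depth information) and the names are hypothetical.

```python
def box_blur(row, radius):
    """Average each pixel with its neighbors within `radius` (edges use fewer)."""
    blurred = []
    for i in range(len(row)):
        lo = max(0, i - radius)
        hi = min(len(row), i + radius + 1)
        window = row[lo:hi]
        blurred.append(sum(window) / len(window))
    return blurred


def portrait_blur(row, subject_mask, radius=2):
    """Keep subject pixels sharp and replace background pixels with blurred ones."""
    blurred = box_blur(row, radius)
    return [
        original if is_subject else soft
        for original, soft, is_subject in zip(row, blurred, subject_mask)
    ]


# A busy background (alternating 0 and 10) with a flat subject in the middle.
row = [0, 10, 0, 10, 5, 5, 5, 10, 0, 10, 0]
mask = [False] * 4 + [True] * 3 + [False] * 4
result = portrait_blur(row, mask, radius=1)
print(result)
```

Even this toy version hints at why the mask matters so much: the blur window near the subject's edge mixes subject pixels into the background, which is the kind of halo a sloppy implementation produces around hair and glasses.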

These techniques are only possible, first, because the requisite imagery has been captured from the stream in the first place (an advance in image sensor and RAM speed), and second, because companies developed highly efficient algorithms to perform these calculations, trained on enormous data sets and immense amounts of computation time.

What’s important about these techniques, however, is not simply that they can be done, but that one company may do them better than the other. And this quality is entirely a function of the software engineering work and artistic oversight that goes into them.

DxOMark did a comparison of some early artificial bokeh systems; the results, however, were somewhat unsatisfying. It was less a question of which looked better, and more of whether they failed or succeeded in applying the effect. Computational photography is in such early days that it is enough for the feature to simply work to impress people. As with a dog walking on its hind legs, we are amazed that it occurs at all.

But Apple has pulled ahead with what some would say is an almost absurdly over-engineered solution to the bokeh problem. It didn’t just learn how to replicate the effect — it used the computing power it has at its disposal to create virtual physical models of the optical phenomenon that produces it. It’s like the difference between animating a bouncing ball and simulating realistic gravity and elastic material physics.

Why go to such lengths? Because Apple knows what is becoming clear to others: that it is absurd to worry about the limits of computational capability at all. There are limits to how well an optical phenomenon can be replicated if you are taking shortcuts like Gaussian blurring. There are no limits to how well it can be replicated if you simulate it at the level of the photon.

Similarly the idea of combining five, 10, or 100 images into a single HDR image seems absurd, but the truth is that in photography, more information is almost always better. If the cost of these computational acrobatics is negligible and the results measurable, why shouldn’t our devices be performing these calculations? In a few years they too will seem ordinary.
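Why is more information almost always better? A small simulation makes the point: averaging many noisy frames of the same scene cancels out random noise. This sketch assumes a static, perfectly aligned scene and simple Gaussian noise, neither of which holds on a real phone, and the function names are illustrative only.

```python
import math
import random


def capture_noisy_frame(true_value, sigma, n_pixels, rng):
    """Simulate one frame of a flat gray scene with Gaussian noise (an assumption)."""
    return [true_value + rng.gauss(0.0, sigma) for _ in range(n_pixels)]


def average_frames(frames):
    """Per-pixel mean across frames; assumes the frames are already aligned."""
    count = len(frames)
    return [sum(pixels) / count for pixels in zip(*frames)]


def rms_error(frame, true_value):
    """Root-mean-square deviation of a frame from the known true value."""
    return math.sqrt(sum((p - true_value) ** 2 for p in frame) / len(frame))


rng = random.Random(0)  # fixed seed so the run is repeatable
frames = [capture_noisy_frame(100.0, 10.0, 2000, rng) for _ in range(16)]

single_error = rms_error(frames[0], 100.0)
stacked_error = rms_error(average_frames(frames), 100.0)
print(f"single frame RMS error:   {single_error:.2f}")
print(f"16-frame stack RMS error: {stacked_error:.2f}")
```

For independent noise the error falls with the square root of the number of frames, so a 16-frame stack should be roughly four times cleaner than any single frame. The hard part in practice is the alignment this sketch takes for granted.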

If the result is a better product, the computational power and engineering ability have been deployed with success; just as Leica or Canon might spend millions to eke fractional performance improvements out of a stable optical system like a $2,000 zoom lens, Apple and others are spending money where they can create value: not in glass, but in silicon.

Double vision

One trend that may appear to conflict with the computational photography narrative I’ve described is the advent of systems comprising multiple cameras.

This technique doesn’t add more light to the sensor — that would be prohibitively complex and expensive optically, and probably wouldn’t work anyway. But if you can free up a little space lengthwise (rather than depthwise, which we found impractical) you can put a whole separate camera right by the first that captures photos extremely similar to those taken by the first.


Now, if all you want to do is re-enact Wayne’s World at an imperceptible scale (camera one, camera two… camera one, camera two…) that’s all you need. But no one actually wants to take two images simultaneously, a fraction of an inch apart.

These two cameras either operate independently (as wide-angle and zoom) or work together, with one augmenting the other to form a single system with multiple inputs.

The thing is that taking the data from one camera and using it to enhance the data from another is — you guessed it — extremely computationally intensive. It’s like the HDR problem of multiple exposures, except far more complex as the images aren’t taken with the same lens and sensor. It can be optimized, but that doesn’t make it easy.

So although adding a second camera is indeed a way to improve the imaging system by physical means, the possibility only exists because of the state of computational photography. And it is the quality of that computational imagery that results in a better photograph — or doesn’t. The Light camera with its 16 sensors and lenses is an example of an ambitious effort that simply didn’t produce better images, though it was using established computational photography techniques to harvest and winnow an even larger collection of images.

Light and code

The future of photography is computational, not optical. This is a massive shift in paradigm and one that every company that makes or uses cameras is currently grappling with. There will be repercussions in traditional cameras like SLRs (rapidly giving way to mirrorless systems), in phones, in embedded devices and everywhere that light is captured and turned into images.

Sometimes this means that the cameras we hear about will be much the same as last year’s, as far as megapixel counts, ISO ranges, f-numbers and so on. That’s okay. With some exceptions these have gotten as good as we can reasonably expect them to be: Glass isn’t getting any clearer, and our vision isn’t getting any more acute. The way light moves through our devices and eyeballs isn’t likely to change much.

What those devices do with that light, however, is changing at an incredible rate. This will produce features that sound ridiculous, or pseudoscience babble on stage, or drained batteries. That’s okay, too. Just as we have experimented with other parts of the camera for the last century and brought them to varying levels of perfection, we have moved on to a new, non-physical “part” which nonetheless has a very important effect on the quality and even possibility of the images we take.
