Year after year, phishing remains one of the most popular and effective ways for attackers to steal your passwords. Most users have been trained to spot the telltale signs of a phishing site, and most of us rely on carefully examining the web address in the browser’s address bar to make sure the site is legitimate.

But even the browser’s anti-phishing features — often the last line of defense for a would-be phishing victim — aren’t perfect.

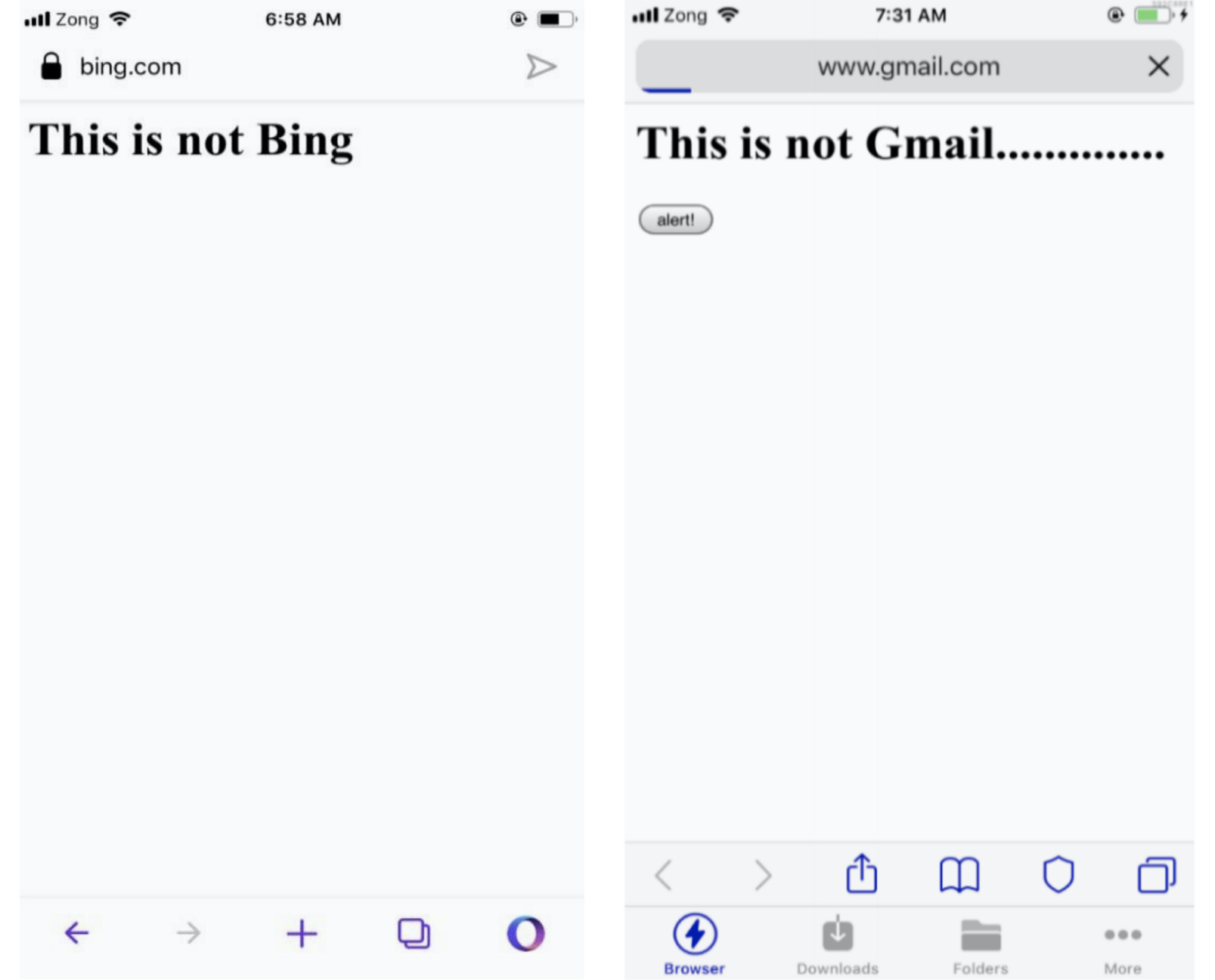

Security researcher Rafay Baloch found several vulnerabilities in some of the most widely used mobile browsers, including Apple’s Safari, Opera, and Yandex. If exploited, the bugs would allow an attacker to trick the browser into displaying a different web address than that of the website the user is actually on. These address bar spoofing bugs make it far easier for attackers to make their phishing pages look like legitimate websites, creating the perfect conditions for someone trying to steal passwords.

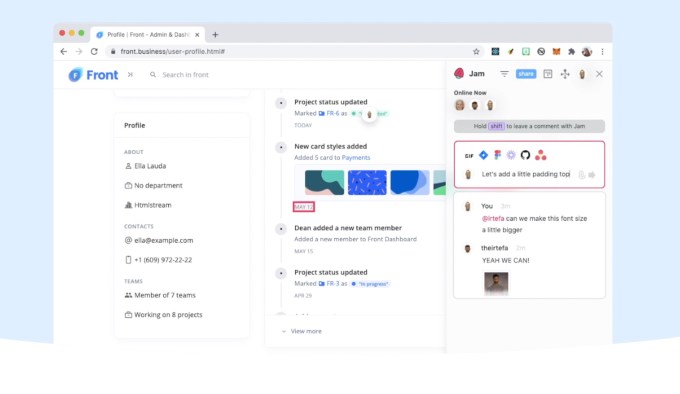

The bugs worked by exploiting a weakness in the time it takes for a vulnerable browser to load a web page. Once a victim is tricked into opening a link from a phishing email or text message, the malicious web page uses code hidden on the page to effectively replace the malicious web address in the browser’s address bar with any other web address that the attacker chooses.

In at least one case, the vulnerable browser retained the green padlock icon, indicating that the malicious web page with a spoofed web address was legitimate — when it wasn’t.

An address bar spoofing bug in Opera Touch for iOS (left) and Bolt Browser (right). These spoofing bugs can make phishing emails look far more convincing. (Image: Rapid7/supplied)

Rapid7’s research director Tod Beardsley, who helped Baloch with disclosing the vulnerabilities to each browser maker, said address bar spoofing attacks put mobile users at particular risk.

“On mobile, space is at an absolute premium, so every fraction of an inch counts. As a result, there’s not a lot of space available for security signals and sigils,” Beardsley told TechCrunch. “While on a desktop browser, you can either look at the link you’re on, mouse over a link to see where you’re going, or even click on the lock to get certificate details. These extra sources don’t really exist on mobile, so the location bar not only tells the user what site they’re on, it’s expected to tell the user this unambiguously and with certainty. If you’re on palpay.com instead of the expected paypal.com, you could notice this and know you’re on a fake site before you type in your password.”

“Spoofing attacks like this make the location bar ambiguous, and thus, allow an attacker to generate some credence and trustworthiness to their fake site,” he said.
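The kind of lookalike address Beardsley describes can also be flagged automatically, before a page is ever opened. What follows is a minimal sketch in Python and not something taken from the researchers' work. It assumes a hypothetical allowlist called `TRUSTED_DOMAINS` and an arbitrary threshold of two character edits, and the function names are illustrative. Because it inspects the hostname of the link itself, it does not depend on what a possibly spoofed address bar displays.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: minimum single-character edits to turn a into b."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete a character from a
                            curr[j - 1] + 1,      # insert a character into a
                            prev[j - 1] + cost))  # substitute (or match)
        prev = curr
    return prev[-1]


# Hypothetical allowlist, used for illustration only.
TRUSTED_DOMAINS = ("paypal.com",)


def classify_host(host: str, trusted=TRUSTED_DOMAINS, max_distance: int = 2) -> str:
    """Return 'trusted', 'lookalike', or 'unknown' for a hostname."""
    host = host.lower().rstrip(".")
    if host in trusted:
        return "trusted"
    # A near miss of a trusted domain is more suspicious than an unrelated name.
    for domain in trusted:
        if edit_distance(host, domain) <= max_distance:
            return "lookalike"
    return "unknown"


if __name__ == "__main__":
    for name in ("paypal.com", "palpay.com", "example.org"):
        print(name, classify_host(name))
```

A plain edit-distance check like this would miss other tricks, such as lookalike Unicode characters or misleading subdomains, so it is best read as an illustration of the idea and not as a complete defense.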

Baloch and Beardsley said the browser makers responded with mixed results.

So far, only Apple and Yandex have pushed out fixes, in September and October. Opera spokesperson Julia Szyndzielorz said the fixes for its Opera Touch and Opera Mini browsers are “in gradual rollout.”

But the makers of UC Browser, Bolt Browser, and RITS Browser — which collectively have more than 600 million device installs — did not respond to the researchers and left the vulnerabilities unpatched.

TechCrunch reached out to each browser maker but none provided a statement by the time of publication.