Reddit has published its transparency report for 2020, showing various numbers relating to removed content, government requests and other administrative actions. The largest problem by far, at least in terms of volume, is spam, which made up nearly all content taken down. Legal requests for content takedown and user information were far fewer in number, but not trivial.

The full report is quite readable, but a bit long; the main points to understand are summarized below.

Of nearly 3.4 billion pieces of content created on Reddit (which is to say posts, comments, hosted images, etc.), 233 million were removed. These numbers are both up by 20%-30% from 2019. Of those 233 million, 131 million were “proactive” removals by the AutoMod system and 13.6 million were removed by subreddit moderators after user reports.

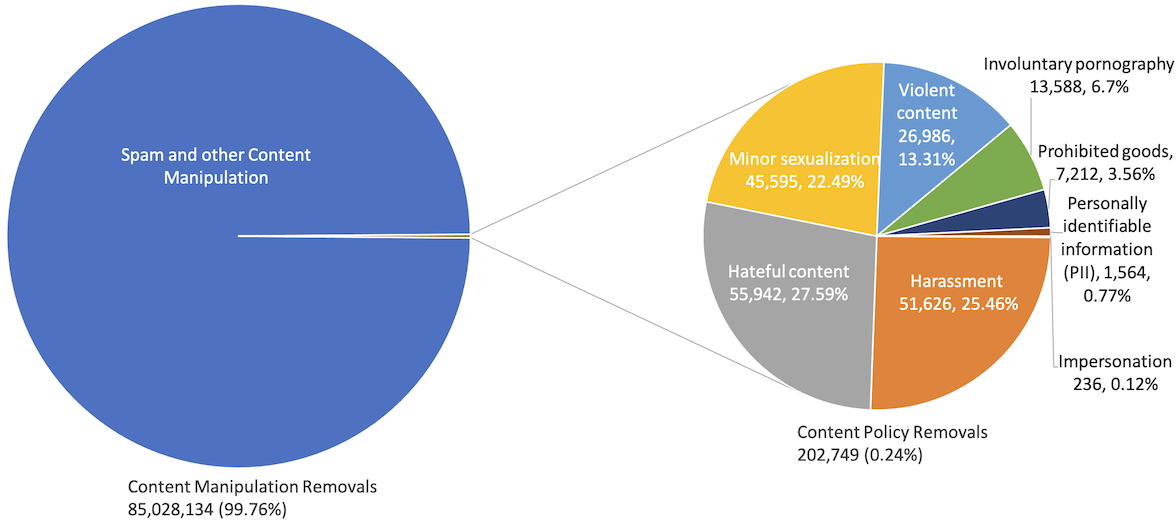

The remaining 85 million were taken down by Reddit admins. Of these, 99.76% were spam or “content manipulation” like brigading and astroturfing; the rest included around 50,000 each for harassment, hate and sexualization of minors, plus smaller amounts of violent speech, doxing and so on.
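To put those figures in proportion, here is a minimal sketch of the arithmetic in Python. It uses only the rounded numbers quoted above, not exact values from the report; the variable names and the `share` helper are illustrative assumptions. Because the inputs are rounded, the three removal categories do not sum exactly to 233 million.

```python
# Rounded figures as quoted in this article (not exact report values).
TOTAL_CREATED = 3_400_000_000   # "nearly 3.4 billion" pieces of content
TOTAL_REMOVED = 233_000_000     # all removals in 2020

REMOVED_BY = {
    "automod": 131_000_000,     # "proactive" AutoMod removals
    "moderators": 13_600_000,   # removed by subreddit moderators
    "admins": 85_000_000,       # removed by Reddit admins
}

ADMIN_SPAM_SHARE = 0.9976       # spam or content manipulation


def share(part, whole):
    """Return part as a percentage of whole."""
    return 100 * part / whole


# Overall removal rate across everything created on the site.
removal_rate = share(TOTAL_REMOVED, TOTAL_CREATED)

# How the removals split between the three groups.
shares = {who: share(n, TOTAL_REMOVED) for who, n in REMOVED_BY.items()}

# Gap between the quoted total and the sum of the quoted parts (rounding).
unaccounted = TOTAL_REMOVED - sum(REMOVED_BY.values())

# Admin removals that were NOT spam or content manipulation.
admin_non_spam = round(REMOVED_BY["admins"] * (1 - ADMIN_SPAM_SHARE))

print(f"Removal rate: {removal_rate:.1f}%")
for who, pct in shares.items():
    print(f"  {who}: {pct:.1f}% of removals")
print(f"Unaccounted for by rounding: {unaccounted:,}")
print(f"Admin removals for non-spam reasons: {admin_non_spam:,}")
```

On these rounded inputs, just under 7% of all content created was removed, and only about 204,000 of the admin removals were for something other than spam or content manipulation, which fits with the roughly 50,000 each cited for harassment, hate and sexualization of minors.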

A total of 82,858 subreddits were removed, nearly four times as many as in 2019. The majority of these were for lack of moderation, followed by hate, harassment and ban evasion (e.g., r/bannedsub starts r/bannedsub2).

Hate, violence and harassment were much more prevalent among removed comments. And of about 25,000 private messages removed, 92% were for harassment.

Outside of spam and content manipulation, hate speech resulted in far more bans than any other infraction; more accounts were permanently banned for hate in 2020 than for all causes combined in 2019. Still, far fewer accounts were banned for content violations than for spam and ban evasion.

Government requests to remove content were relatively few. Overall, Reddit received a couple hundred requests covering about 5,000 pieces of content or subreddits. For example, 753 subreddits were made inaccessible to users in Pakistan due to anti-obscenity laws there.

Requests from individuals or companies to remove things numbered in the hundreds, and copyright takedown notices asked for about half a million pieces of content to be removed (375,774 were), more than twice as many as in 2019. Only a handful of DMCA counter-notices were received.

Law enforcement came to Reddit 611 times for user information, up 50% from 2019, and the company granted 424 of those requests. These are mostly subpoenas, court orders and search warrants. Since Reddit isn’t really a social network and accounts can be essentially anonymous or throwaway, it’s hard to say what level of disclosure this actually represents. Emergency disclosure requests numbered about 300 and were mostly complied with; these are supposedly life-or-death situations in which a Reddit account is concerned.

Lastly, Reddit received somewhere between 0 and 249 secret requests for data, targeting somewhere between 0 and 249 users, the same as in 2019. Sadly, federal law prohibits the company from saying any more than this regarding FISA orders and National Security Letters.

Overall, the picture painted of Reddit in 2020 is of a growing community plagued by spam and inauthentic activity, plus a significant and growing contingent of hate, harassment and other prohibited content (though 2020 was surely an exceptional year for this). Lacking much fundamental access to or use of personally identifiable data, Reddit isn’t much of a target for three-letter agencies and law enforcement. And with “free speech”-focused alternatives to Reddit and other platforms popping up, it’s likely that the hate and harassment that were deplatformed will roost elsewhere in 2021.