At a Senate hearing this week in which US lawmakers quizzed tech giants on how they should go about drawing up comprehensive Federal consumer privacy protection legislation, Apple’s VP of software technology described privacy as a “core value” for the company.

“We want your device to know everything about you but we don’t think we should,” Bud Tribble told them in his opening remarks.

Facebook was not at the commerce committee hearing which, as well as Apple, included reps from Amazon, AT&T, Charter Communications, Google and Twitter.

But the company could hardly have made such a claim had it been in the room, given that its business is based on trying to know everything about you in order to dart you with ads.

You could say Facebook has ‘hostility to privacy’ as a core value.

Earlier this year one US senator wondered of Mark Zuckerberg how Facebook could run its service given it doesn’t charge users for access. “Senator we run ads,” was the almost startled response, as if the Facebook founder couldn’t believe his luck at the not-even-surface-level political probing his platform was getting.

But there have been tougher moments of scrutiny for Zuckerberg and his company in 2018, as public awareness about how people’s data is being ceaselessly sucked out of platforms and passed around in the background, as fuel for a certain slice of the digital economy, has grown and grown — fuelled by a steady parade of data breaches and privacy scandals which provide a glimpse behind the curtain.

On the data scandal front Facebook has reigned supreme, whether it’s as an ‘oops we just didn’t think of that’ spreader of socially divisive ads paid for by Kremlin agents (sometimes with roubles!); or as a carefree host for third party apps to party at its users’ expense by silently hoovering up info on their friends, in the multi-millions.

Facebook’s response to the Cambridge Analytica debacle was to loudly claim it was ‘locking the platform down’. And try to paint everyone else as the rogue data sucker, to avoid the obvious and awkward fact that its own business functions in much the same way.

All this scandalabra has kept Facebook execs very busy this year, with policy staffers and execs being grilled by lawmakers on an increasing number of fronts and issues: from election interference and data misuse, to ad transparency, hate speech and abuse, and also directly, and at times closely, on consumer privacy and control.

Facebook shielded its founder from one sought for grilling on data misuse, as UK MPs investigated online disinformation vs democracy, as well as examining wider issues around consumer control and privacy. (They’ve since recommended a social media levy to safeguard society from platform power.)

The DCMS committee wanted Zuckerberg to testify to unpick how Facebook’s platform contributes to the spread of disinformation online. The company sent various reps to face questions (including its CTO) — but never the founder (not even via video link). And committee chair Damian Collins was withering and public in his criticism of Facebook sidestepping close questioning — saying the company had displayed a “pattern” of uncooperative behaviour, and “an unwillingness to engage, and a desire to hold onto information and not disclose it.”

As a result, Zuckerberg’s tally of public appearances before lawmakers this year stands at just two domestic hearings, in the US Senate and House, and one at a meeting of the EU parliament’s conference of presidents (which switched from a behind closed doors format to being streamed online after a revolt by parliamentarians), where he was heckled by MEPs for avoiding their questions.

But three sessions in a handful of months is still a lot more political grillings than Zuckerberg has ever faced before.

He’s going to need to get used to awkward questions now that lawmakers have woken up to the power and risk of his platform.

Security, weaponized

What has become increasingly clear from the growing sound and fury over privacy and Facebook (and Facebook and privacy), is that a key plank of the company’s strategy to fight against the rise of consumer privacy as a mainstream concern is misdirection and cynical exploitation of valid security concerns.

Simply put, Facebook is weaponizing security to shield its erosion of privacy.

Privacy legislation is perhaps the only thing that could pose an existential threat to a business that’s entirely powered by watching and recording what people do at vast scale. And relying on that scale (and its own dark pattern design) to manipulate consent flows to acquire the private data it needs to profit.

Only robust privacy laws could bring Facebook’s self-serving house of cards tumbling down. User growth on its main service isn’t what it was but the company has shown itself very adept at picking up (and picking off) potential competitors — applying its surveillance practices to crushing competition too.

In Europe lawmakers have already tightened privacy oversight on digital businesses and massively beefed up penalties for data misuse. Under the region’s new GDPR framework compliance violations can attract fines as high as 4% of a company’s global annual turnover.

Which would mean billions of dollars in Facebook’s case — vs the pinprick penalties it has been dealing with for data abuse up to now.

Though fines aren’t the real point; if Facebook is forced to change its processes, so how it harvests and mines people’s data, that could knock a major, major hole right through its profit-center.

Hence the existential nature of the threat.

The GDPR came into force in May and multiple investigations are already underway. This summer the EU’s data protection supervisor, Giovanni Buttarelli, told the Washington Post to expect the first results by the end of the year.

Which means 2018 could result in some very well known tech giants being hit with major fines. And — more interestingly — being forced to change how they approach privacy.

One target for GDPR complainants is so-called ‘forced consent’, where consumers are told by platforms leveraging powerful network effects that they must accept giving up their privacy as the ‘take it or leave it’ price of accessing the service. Which doesn’t exactly smell like the ‘free choice’ EU law actually requires.

It’s not just Europe, either. Regulators across the globe are paying greater attention than ever to the use and abuse of people’s data. And also, therefore, to Facebook’s business — which profits, so very handsomely, by exploiting privacy to build profiles on literally billions of people in order to dart them with ads.

US lawmakers are now directly asking tech firms whether they should implement GDPR style legislation at home.

Unsurprisingly, tech giants are not at all keen — arguing, as they did at this week’s hearing, for the need to “balance” individual privacy rights against “freedom to innovate”.

So a joint lobbying front to try to water down any US privacy clampdown is in full effect. (Though when also asked this week whether they would leave Europe or California as a result of tougher-than-they’d-like privacy laws, none of the tech giants said they would.)

The state of California passed its own robust privacy law, the California Consumer Privacy Act, this summer, which is due to come into force in 2020. And the tech industry is not a fan. So its engagement with federal lawmakers now is a clear attempt to secure a weaker federal framework to ride over any more stringent state laws.

Europe and its GDPR obviously can’t be rolled over like that, though. Even as tech giants like Facebook have certainly been seeing how much they can get away with, to force an expensive and time-consuming legal fight.

While ‘innovation’ is one oft-trotted angle tech firms use to argue against consumer privacy protections, Facebook included, the company has another tactic too: Deploying the ‘S’ word — security — both to fend off increasingly tricky questions from lawmakers, as they finally get up to speed and start to grapple with what it’s actually doing; and — more broadly — to keep its people-mining, ad-targeting business steamrollering on by greasing the pipe that keeps the personal data flowing in.

In recent years multiple major data misuse scandals have undoubtedly raised consumer awareness about privacy, and put greater emphasis on the value of robustly securing personal data. Scandals that even seem to have begun to impact how some Facebook users Facebook. So the risks for its business are clear.

Part of its strategic response, then, looks like an attempt to collapse the distinction between security and privacy — by using security concerns to shield privacy hostile practices from critical scrutiny, specifically by chain-linking its data-harvesting activities to some vaguely invoked “security purposes”, whether that’s security for all Facebook users against malicious non-users trying to hack them; or, wider still, for every engaged citizen who wants democracy to be protected from fake accounts spreading malicious propaganda.

So the game Facebook is here playing is to use security as a very broad-brush to try to defang legislation that could radically shrink its access to people’s data.

Here, for example, is Zuckerberg responding to a question from an MEP in the EU parliament asking for answers on so-called ‘shadow profiles’ (aka the personal data the company collects on non-users) — emphasis mine:

“It’s very important that we don’t have people who aren’t Facebook users that are coming to our service and trying to scrape the public data that’s available. And one of the ways that we do that is people use our service and even if they’re not signed in we need to understand how they’re using the service to prevent bad activity.”

At this point in the meeting Zuckerberg also suggestively referenced MEPs’ concerns about election interference — to better play on a security fear that’s inexorably close to their hearts. (With the spectre of re-election looming next spring.) So he’s making good use of his psychology major.

“On the security side we think it’s important to keep it to protect people in our community,” he also said when pressed by MEPs to answer how a person who isn’t a Facebook user could delete its shadow profile of them.

He was also questioned about shadow profiles by the House Energy and Commerce Committee in April. And used the same security justification for harvesting data on people who aren’t Facebook users.

“Congressman, in general we collect data on people who have not signed up for Facebook for security purposes to prevent the kind of scraping you were just referring to [reverse searches based on public info like phone numbers],” he said. “In order to prevent people from scraping public information… we need to know when someone is repeatedly trying to access our services.”

He claimed not to know “off the top of my head” how many data points Facebook holds on non-users (nor even on users, which the congressman had also asked for, for comparative purposes).

These sorts of exchanges are very telling because for years Facebook has relied upon people not knowing or really understanding how its platform works to keep what are clearly ethically questionable practices from closer scrutiny.

But, as political attention has dialled up around privacy, and it’s become harder for the company to simply deny or fog what it’s actually doing, Facebook appears to be evolving its defence strategy: by defiantly arguing it simply must profile everyone, including non-users, for user security.

No matter this is the same company which, despite maintaining all those shadow profiles on its servers, famously failed to spot Kremlin election interference going on at massive scale in its own back yard — and thus failed to protect its users from malicious propaganda.

Nor was Facebook capable of preventing its platform from being repurposed as a conduit for accelerating ethnic hate in a country such as Myanmar — with some truly tragic consequences. Yet it must, presumably, hold shadow profiles on non-users there too. Yet was seemingly unable (or unwilling) to use that intelligence to help protect actual lives…

So when Zuckerberg invokes overarching “security purposes” as a justification for violating people’s privacy en masse it pays to ask critical questions about what kind of security it’s actually purporting to be able to deliver. Beyond, y’know, continued security for its own business model as it comes under increasing attack.

What Facebook indisputably does do with ‘shadow contact information’, acquired about people via other means than the person themselves handing it over, is to use it to target people with ads. So it uses intelligence harvested without consent to make money.

Facebook confirmed as much this week, when Gizmodo asked it to respond to a study by some US academics that showed how a piece of personal data that had never been knowingly provided to Facebook by its owner could still be used to target an ad at that person.

Responding to the study, Facebook admitted it was “likely” the academic had been shown the ad “because someone else uploaded his contact information via contact importer”.

“People own their address books. We understand that in some cases this may mean that another person may not be able to control the contact information someone else uploads about them,” it told Gizmodo.

So essentially Facebook has finally admitted that consentless scraped contact information is a core part of its ad targeting apparatus.

Safe to say, that’s not going to play at all well in Europe.

Basically Facebook is saying you own and control your personal data until it can acquire it from someone else — and then, er, nope!

Yet given the reach of its network, the chances of your data not sitting on its servers somewhere seem very, very slim. So Facebook is essentially invading the privacy of pretty much everyone in the world who has ever used a mobile phone. (Something like two-thirds of the global population then.)

In other contexts this would be called spying — or, well, ‘mass surveillance’.

It’s also how Facebook makes money.

And yet when called in front of lawmakers and asked about the ethics of spying on the majority of the people on the planet, the company seeks to justify this supermassive privacy intrusion by suggesting that gathering data about every phone user without their consent is necessary for some fuzzily-defined “security purposes”, even as its own record on security really isn’t looking so shiny these days.

It’s as if Facebook is trying to lift a page out of national intelligence agency playbooks — when governments claim ‘mass surveillance’ of populations is necessary for security purposes like counterterrorism.

Except Facebook is a commercial company, not the NSA.

So it’s only fighting to keep being able to carpet-bomb the planet with ads.

Profiting from shadow profiles

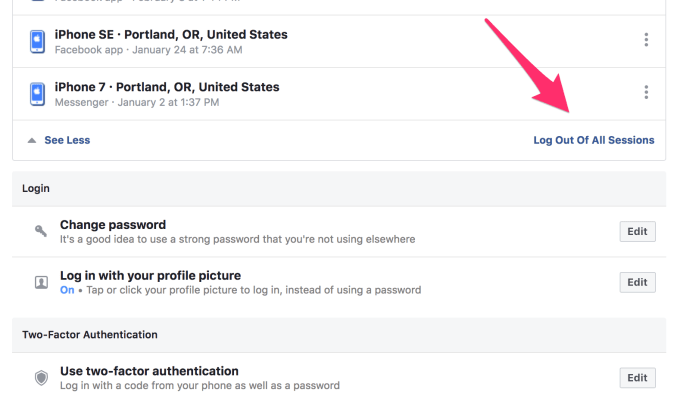

Another example of Facebook weaponizing security to erode privacy was also confirmed via Gizmodo’s reportage. The same academics found the company uses phone numbers provided to it by users for the specific (security) purpose of enabling two-factor authentication, which is a technique intended to make it harder for a hacker to take over an account, to also target them with ads.

In a nutshell, Facebook is exploiting its users’ valid security fears about being hacked in order to make itself more money.

Any security expert worth their salt will have spent long years encouraging web users to turn on two factor authentication for as many of their accounts as possible in order to reduce the risk of being hacked. So Facebook exploiting that security vector to boost its profits is truly awful. Because it works against those valiant infosec efforts — so risks eroding users’ security as well as trampling all over their privacy.

It’s just a double whammy of awful, awful behavior.

And of course, there’s more.

A third example of how Facebook seeks to play on people’s security fears to enable deeper privacy intrusion comes by way of the recent rollout of its facial recognition technology in Europe.

In this region the company had previously been forced to pull the plug on facial recognition after being leaned on by privacy conscious regulators. But after having to redesign its consent flows to come up with its version of ‘GDPR compliance’ in time for May 25, Facebook used this opportunity to revisit a rollout of the technology on Europeans — by asking users there to consent to switching it on.

Now you might think that asking for consent sounds okay on the surface. But it pays to remember that Facebook is a master of dark pattern design.

Which means it’s expert at extracting outcomes from people by applying these manipulative dark arts. (Don’t forget, it has even directly experimented in manipulating users’ emotions.)

So can it be a free consent if ‘individual choice’ is set against a powerful technology platform that’s both in charge of the consent wording, button placement and button design, and which can also data-mine the behavior of its 2BN+ users to further inform and tweak (via A/B testing) the design of the aforementioned ‘consent flow’? (Or, to put it another way, is it still ‘yes’ if the tiny greyscale ‘no’ button fades away when your cursor approaches while the big ‘YES’ button pops and blinks suggestively?)

In the case of facial recognition, Facebook used a manipulative consent flow that included a couple of self-serving ‘examples’ — selling the ‘benefits’ of the technology to users before they landed on the screen where they could choose either yes switch it on, or no leave it off.

One of which explicitly played on people’s security fears — by suggesting that without the technology enabled users were at risk of being impersonated by strangers. Whereas, by agreeing to do what Facebook wanted you to do, Facebook said it would help “protect you from a stranger using your photo to impersonate you”…

That example shows the company is not above actively jerking on the chain of people’s security fears, as well as passively exploiting similar security worries when it jerkily repurposes 2FA digits for ad targeting.

There’s even more too; Facebook has been positioning itself to pull off what is arguably the greatest (in the ‘largest’ sense of the word) appropriation of security concerns yet to shield its behind-the-scenes trampling of user privacy — when, from next year, it will begin injecting ads into the WhatsApp messaging platform.

These will be targeted ads, because Facebook has already changed the WhatsApp T&Cs to link Facebook and WhatsApp accounts — via phone number matching and other technical means that enable it to connect distinct accounts across two otherwise entirely separate social services.

Thing is, WhatsApp got fat on its founders’ promise of 100% ad-free messaging. The founders were also privacy and security champions, pushing to roll e2e encryption right across the platform, even after selling their app to the adtech giant in 2014.

WhatsApp’s robust e2e encryption means Facebook literally cannot read the messages users are sending each other. But that does not mean Facebook is respecting WhatsApp users’ privacy.

On the contrary: the company has given itself broader rights to user data by changing the WhatsApp T&Cs and by matching accounts.

So, really, it’s all just one big Facebook profile now — whichever of its products you do (or don’t) use.

This means that even without literally reading your WhatsApps, Facebook can still know plenty about a WhatsApp user, thanks to any other Facebook Group profiles they have ever had and any shadow profiles it maintains in parallel. WhatsApp users will soon become 1.5BN+ bullseyes for yet more creepily intrusive Facebook ads to seek their target.

No private spaces, then, in Facebook’s empire as the company capitalizes on people’s fears to shift the debate away from personal privacy and onto the self-serving notion of ‘secured by Facebook spaces’ — in order that it can keep sucking up people’s personal data.

This is a very dangerous strategy, though.

Because if Facebook can’t even deliver security for its users, thereby undermining those “security purposes” it keeps banging on about, it might find it difficult to sell the world on going naked just so Facebook Inc can keep turning a profit.

What’s the best security practice of all? That’s super simple: Not holding data in the first place.