It’s true, you’ve got the Galaxy Note to thank for your big phone. When the device hit the scene at IFA 2011, large screens were still a punchline. That same year, Steve Jobs famously joked about phones with screens larger than four inches, telling a crowd of reporters, “nobody’s going to buy that.”

In 2019, the average screen size hovers around 5.5 inches. That’s a touch larger than the original Note’s 5.3 inches, a size that was widely mocked by much of the industry press at the time. Of course, the mainstreaming of larger phones comes largely courtesy of a much-improved screen-to-body ratio, another place where Samsung has continued to lead the way.

In some sense, the Note has been doomed by its own success. As the rest of the industry caught up, the line blended into the background. Samsung didn’t do the product any favors by dropping the pretense of distinction between the Note and its Galaxy S line.

Ultimately, the two products served as an opportunity to have a six-month refresh cycle for its flagships. Samsung, of course, has been hit with the same sort of malaise as the rest of the industry. The smartphone market isn’t the unstoppable machine it appeared to be two or three years back.

Like the rest of the industry, the company painted itself into a corner with the smartphone race, creating flagships good enough to convince users to hold onto them for an extra year or two, greatly slowing the upgrade cycle in the process. Ever-inflating prices have also been a part of smartphone sales stagnation — something Samsung and the Note are as guilty of as any.

So what’s a poor smartphone manufacturer to do? The Note 10 represents baby steps. As it did with the S line recently, Samsung is now offering two models. The base Note 10 represents a rare step backward in terms of screen size, shrinking down slightly from 6.4 to 6.3 inches, while reducing resolution from Quad HD to Full HD.

The seemingly regressive step lets Samsung come in a bit under last year’s jaw-dropping $1,000. The new Note is only $50 cheaper, but moving from four to three figures may have a positive psychological effect for wary buyers. Meanwhile, the slightly smaller screen, coupled with a better screen-to-body ratio, makes for a device that’s surprisingly slim.

If anything, the Note 10+ feels like the true successor to the Note line. The baseline device could have just as well been labeled the Note 10 Lite. That’s something Samsung is keenly aware of, as it targets first-time Note users with the 10 and true believers with the 10+. In both cases, Samsung is faced with the same task as the rest of the industry: offering a compelling reason for users to upgrade.

Earlier this week, a Note 9 owner asked me whether the new device warrants an upgrade. The answer is, of course, no. The pace of smartphone innovation has slowed, even as prices have risen. Honestly, the 10 doesn’t really offer that many compelling reasons to upgrade from the Note 8.

That’s not a slight against Samsung or the Note, per se. If anything, it’s a reflection of the fact that these phones are quite good, and have been for some time. Anecdotally, industry excitement around these devices has been tapering for a while now, and the device’s launch in the midst of the doldrums of August likely didn’t help much.


The past few years have seen smartphones transform from coveted, bleeding-edge luxury to necessity. The good news to that end, however, is that the Note continues to be among the best devices out there.

The common refrain in the earliest days of the phablet was the inability to wrap one’s fingers around the device. It’s a pragmatic issue. Certainly you don’t want to use a phone day to day that’s impossible to hold. But Samsung’s remarkable job of improving the screen-to-body ratio continues here. In fact, the 6.8-inch Note 10+ has roughly the same footprint as the 6.4-inch Note 9.

The issue will still persist for those with smaller hands — though thankfully Samsung’s got a solution for them in the Note 10. For the rest of us, the Note 10+ is easily held in one hand and slipped in and out of pants pockets. I realize these seem like weird things to say at this point, but I assure you they were legitimate concerns in the earliest days of the phablet, when these things were giant hunks of plastic and glass.

Samsung’s curved display once again does much of the heavy lifting here, allowing the screen to stretch nearly from side to side with only a little bezel at the edge. Up top is a hole-punch camera — that’s “Infinity O” to you. Those with keen eyes no doubt immediately noticed that Samsung has dropped the dual selfie camera here, moving toward the more popular hole-punch camera.

The company’s reasoning for this was both aesthetic and, apparently, practical. The company moved back down to a single camera for the front (10 megapixel), citing reasoning similar to Google’s for the single rear-facing camera on the Pixel: software has greatly improved what companies can do with a single lens. That’s certainly the case to a degree, and a strong case can be made for the selfie camera, which we generally require less of than the rear-facing array.

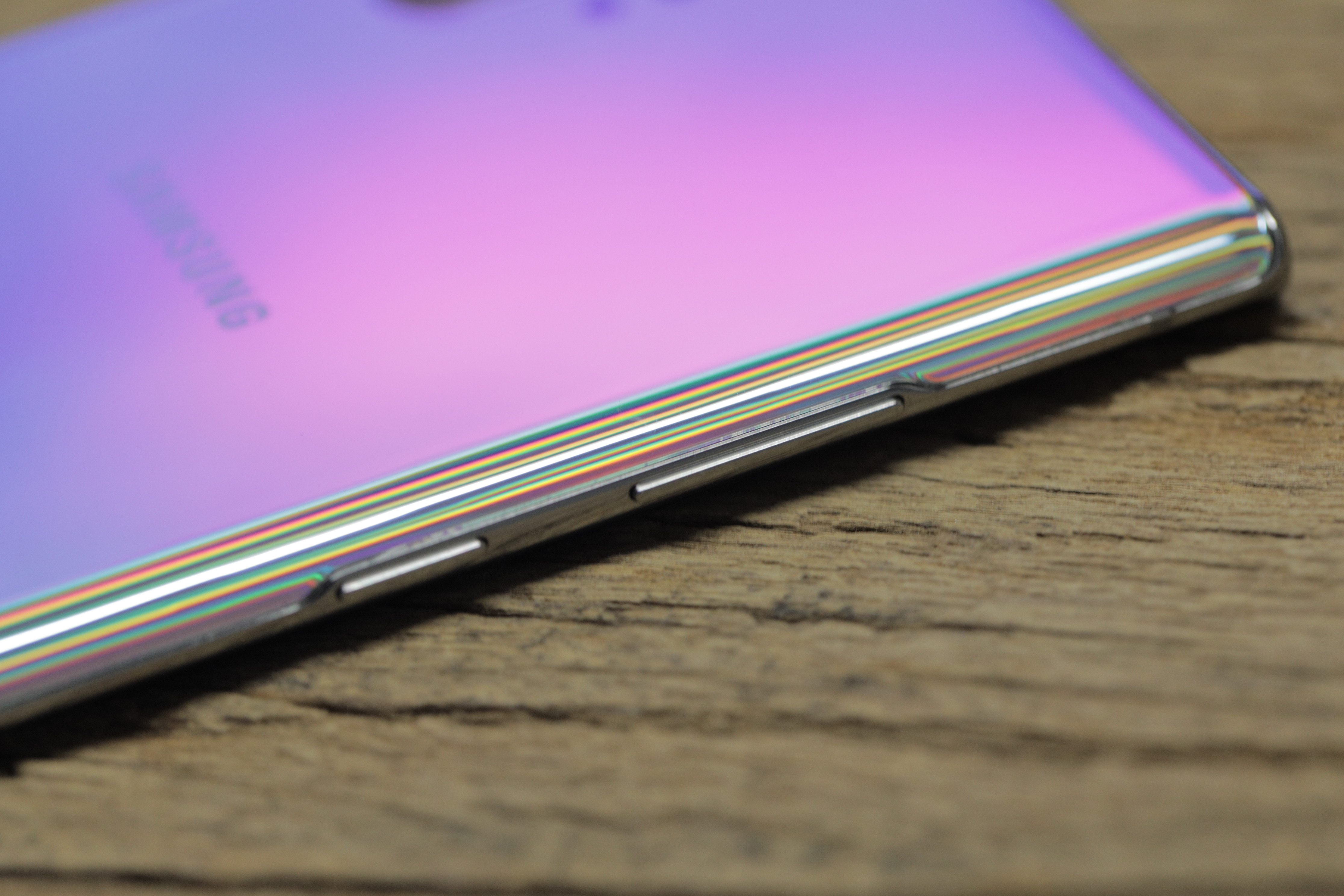

The company’s gone increasingly minimalist with the design language — something I appreciate. Over the years, as the smartphone has increasingly become a day to day utility, the product’s design has increasingly gotten out of its own way. The front and back are both made of a curved Gorilla Glass that butts up against a thin metal form with a total thickness of 7.9 millimeters.

On certain smooth surfaces like glass, you’ll occasionally find the device gliding slightly. I’d say the chances of dropping it are pretty decent with its frictionless design language, so you’re going to want to get a case for your $1,000 phone. Before you do, admire that color scheme on the back. There are four choices in all. Like the rest of the press, we ended up with Aura Glow.

It features a lovely, prismatic effect when light hits it. It’s proven a bit tricky to photograph, honestly. It’s also a fingerprint magnet, but these are the prices we pay to have the prettiest phone on the block.

One of the interesting footnotes here is how much the design of the 10 will be defined by what the device lost. There are two missing pieces here — both of which are a kind of concession from Samsung for different reasons. And for different reasons, both feel inevitable.

The headphone jack is, of course, the biggie. Samsung kicked and screamed on that one, holding onto the 3.5mm for dear life and roundly mocking the competition (read: Apple) at every turn. The company must have known it was a matter of time, even before the iPhone dropped the port three years ago.

Samsung glossed over the end of the jack (and apparently unlisted its Apple-mocking ads in the process) during the Note’s launch event. It was a stark contrast from a briefing we got around the device’s announcement, where the company’s reps spent significantly more time justifying the move. They know us well enough to know that we’d spend a little time taking the piss out of the company after three years of it making the once ubiquitous port a feature. All’s fair in love and port. And honestly, it was mostly just some good-natured ribbing. Welcome to the club, Samsung.

As for why Samsung did it now, the answer seems to be two-fold. The first is a kind of critical mass in Bluetooth headset usage. Allow me to quote myself from a few weeks back:

> The tipping point, it says, came when its internal metrics showed that a majority of users on its flagship devices (the S and Note lines) moved to Bluetooth streaming. The company says the number is now in excess of 70% of users.

Also, as we’re all abundantly aware, the company put its big battery ambitions on hold for a bit, as it dealt with…more burning problems. A couple of recalls, a humble press release and an eight-point battery check later, and batteries are getting bigger again. There’s a 3,500mAh on the Note 10 and a 4,300mAh on the 10+. I’m happy to report that the latter got me through a full day plus three hours on a charge. Not bad, given all of the music and videos I subjected it to in that time.

There’s no USB-C dongle in-box. The rumors got that one wrong. You can pick up a Samsung-branded adapter for $15, or get one for much cheaper elsewhere. There is, however, a pair of AKG USB-C headphones in-box. I’ve said this before and I’ll say it again: Samsung doesn’t get enough credit for its free headphones. I’ve been known to use the pairs with other devices. They’re not the greatest in the world, but they’re better sounding and more comfortable than what a lot of other companies offer in-box.

Obviously the standard no headphone jack things apply here. You can’t use the wired headphones and charge at the same time (unless you go wireless). You know the deal.

The other missing piece here is the Bixby button. I’m sure there are a handful of folks out there who will bemoan its loss, but that’s almost certainly a minority of the minority here. Since the button was first introduced, folks were asking for the ability to remap it. Samsung finally relented on that front, and with the Note 10, it drops the button altogether.

Thus far the smart assistant has been a disappointment. That’s due in no small part to a late launch compared to the likes of Siri, Alexa and Assistant, coupled with a general lack of capability at launch. In Samsung’s defense, the company’s been working to fix that with some pretty massive investment and a big push to court developers. There’s hope for Bixby yet, but a majority of users weren’t eager to have the assistant thrust upon them.

Instead, the power button has been shifted to the left of the device, just under the volume rocker. I preferred having it on the other side, especially for certain functions like screenshotting (something, granted, I do much more than the average user when reviewing a phone). That’s a pretty small quibble, of course.

Bixby can now be quickly accessed by holding down the power button. Handily, Samsung still lets you reassign the function there, if you really want Bixby out of your life. You can also hold down to get the power off menu or double press to launch Bixby or a third-party app (I opted for Spotify, probably my most used these days), though not a different assistant.

Imaging, meanwhile, is something Samsung’s been doing for a long time. The past several generations of S and Note devices have had great camera systems, and it continues to be the main point of improvement. It’s also one of few points of distinction between the 10 and 10+, aside from size.

The Note 10+ has four, count ’em, four rear-facing cameras. They are as follows:

- Ultra Wide: 16 megapixel

- Wide: 12 megapixel

- Telephoto: 12 megapixel

- DepthVision

That last one is only on the Plus. It’s made up of two little circles to the right of the primary camera array and just below the flash. We’ll get to that in a second.

The main camera array continues to be one of the best in mobile. The inclusion of telephoto and ultra-wide lenses allows for a wide range of different shots, and the hardware coupled with machine learning makes it a lot more difficult to take a bad photo (though believe me, it’s still possible).


The live focus feature (Portrait mode, essentially) comes to video, with four different filters, including Color Point, which makes everything but the subject black and white.

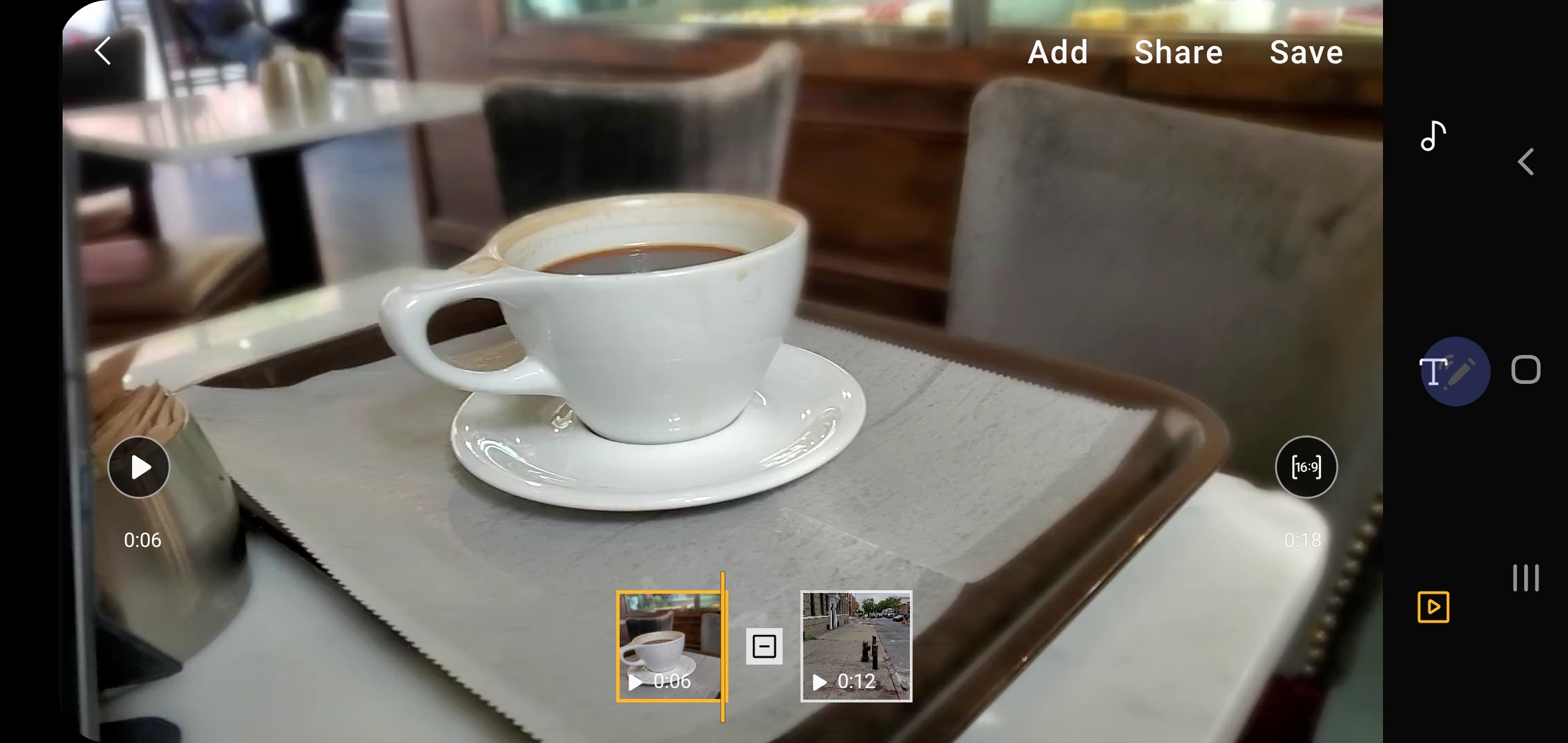

Samsung’s also brought a very simple video editor into the mix here, which is nice on the fly. You can edit the length of clips, splice in other clips, add subtitles and captions and add filters and music. It’s pretty beefy for something baked directly into the camera app, and one of the better uses I’ve found for the S Pen.

Note 10+ with Super Steady (left), iPhone XS (right)

Ditto for the improved Super Steady offering, which smooths out shaky video, including Hyperlapse mode, where handshakes are a big issue. It works well, but you do lose access to other features, including zoom. For that reason, it’s off by default and should be used relatively sparingly.

Note 10+ (left), iPhone XS (right)

Zoom-on Mic is a clever addition, as well. While shooting video, pinch-zooming on something will amplify the noise from that area. I’ve been playing around with it in this cafe. It’s interesting, but less than perfect.


Zooming into something doesn’t exactly cancel out ambient noise from outside of the frame. Everything still gets amplified and, as with digital picture zoom, a lot of noise gets added in the process. To those hoping for a kind of spy microphone, I’m sorry/happy to report that this definitely is not that.

The DepthVision Camera is also pretty limited as I write this. If anything, it’s Samsung’s attempt to brace for a future when things like augmented reality will (theoretically) play a much larger role in our mobile computing. In a conversation I had with the company ahead of launch, they suggested that a lot of the camera’s AR functions will fall in the hands of developers.

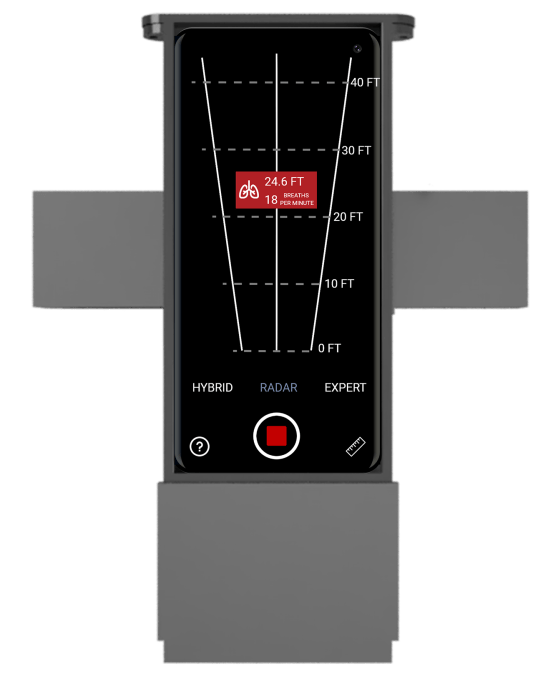

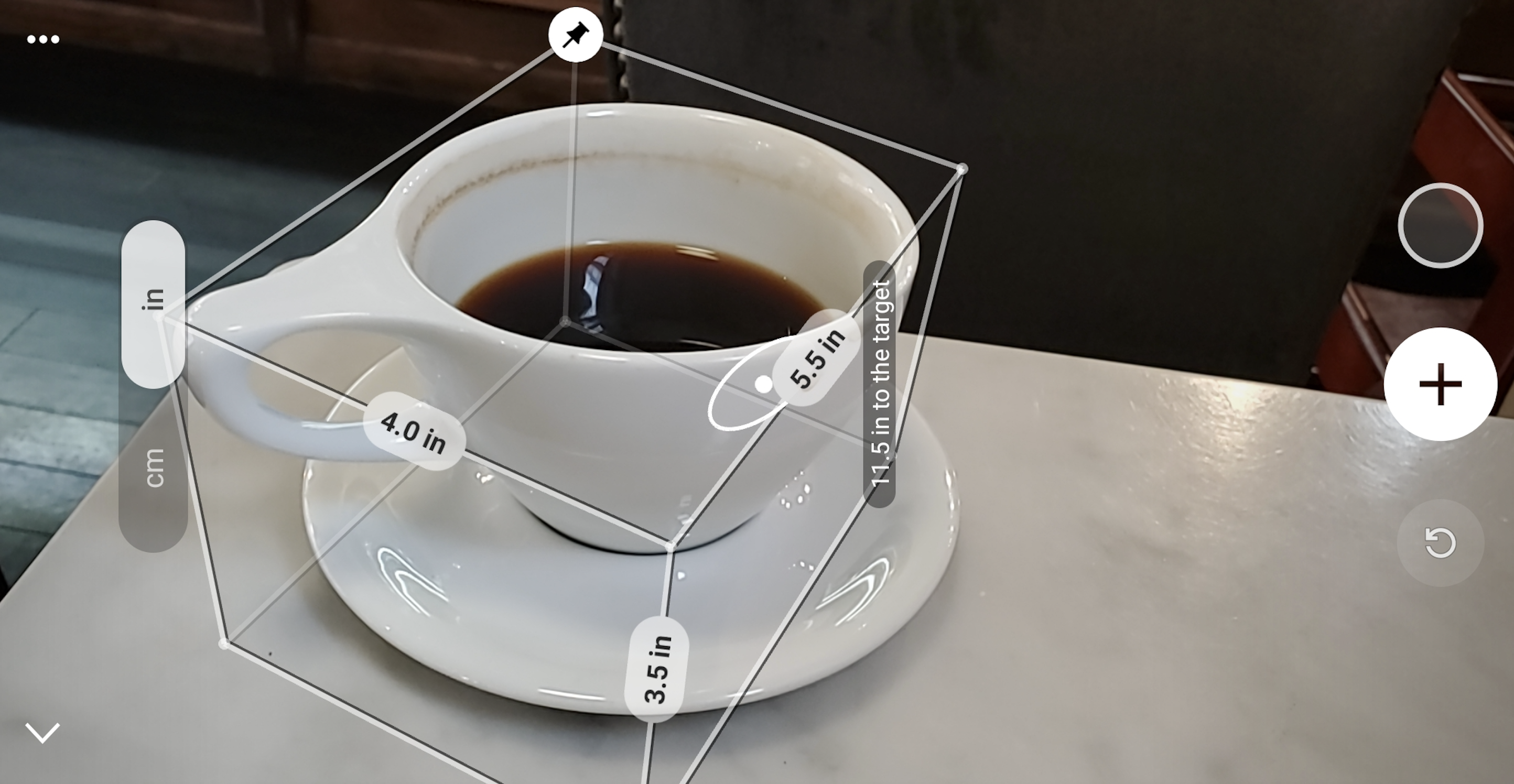

For now, Quick Measure is the one practical use. The app is a lot like Apple’s more simply titled Measure. Fire it up, move the camera around to get a lay of the land and it will measure nearby objects for you. An interesting showcase for AR potential? Sure. Earth shattering? Naw. It also seems to be a bit of a battery drain, sucking up the last few bits of juice as I was running it down.

3D Scanner, on the other hand, got by far the biggest applause line of the Note event. And, indeed, it’s impressive. In the stage demo, a Samsung employee scanned a stuffed pink beaver (I’m not making this up), created a 3D image and animated it using an associate’s movements. Practical? Not really. Cool? Definitely.

It was, however, not available at press time. Hopefully it proves to be more than vaporware, especially if that demo helped push some viewers over to the 10+. Without it, there’s just not a lot of use for the depth camera at the moment.

There’s also AR Doodle, which fills a similar spot as much of the company’s AR offerings. It’s kind of fun, but again, not particularly useful. You’ll likely end up playing with it for a few minutes and forget about it entirely. Such is life.

The feature is built into the camera app, using depth sensing to orient live drawings. With the stylus you can draw in space or doodle on people’s faces. It’s neat, the AR works okay and I was bored with it in about three minutes. Like Quick Measure, the feature is as much a proof of concept as anything. But that’s always been a part of Samsung’s kitchen-sink approach — some combination of useful and silly.

That said, points to Samsung for continuing to de-creepify AR Emojis. Those have moved firmly away from the uncanny valley into something more cartoony/adorable. Less ironic usage will surely follow.

Asked about the key differences between the S and Note lines, Samsung’s response was simple: the S Pen. Otherwise, the lines are relatively interchangeable.

Samsung’s revival of the stylus didn’t catch on for handsets quite like the phablet form factor did. Styluses have made a pretty significant comeback for tablets, but the Note remains fairly singular when it comes to the S Pen. I’ve never been a big user myself, but those who like it swear by it. It’s one of those things like the ThinkPad pointing stick or BlackBerry scroll wheel.

Like the phone itself, the peripheral has been streamlined with a unibody design. Samsung also continues to add capabilities. It can be used to control music, advance slideshows and snap photos. None of that is likely to convince S Pen skeptics (I prefer using the buttons on the included headphones for music control, for example), but more versatility is generally a good thing.

If anything is going to convince people to pick up the S Pen this time out, it’s the improved handwriting recognition. That’s pretty impressive. It was even able to decipher my awful chicken scratch.

You get the same sort of bleeding-edge specs here you’ve come to expect from Samsung’s flagships. The 10+ gets you a baseline 256GB of storage (upgradable to 512GB), coupled with a beefy 12GB of RAM (the regular Note is a still-good 8GB/256GB). The 5G version sports the same numbers and battery (likely making its total life a bit shorter per charge). That’s a shift from the S10, whose 5G version was specced out like crazy. Likely Samsung is bracing for 5G to become less of a novelty in the next year or so.

The new Note also benefits from other recent additions, like the in-display fingerprint reader and wireless power sharing. Both are nice additions, but neither is likely enough to warrant an immediate upgrade.

Once again, that’s not an indictment of Samsung, so much as a reflection of where we are in the life cycle of a mature smartphone industry. The Note 10+ is another good addition to one of the leading smartphone lines. It succeeds as both a productivity device (thanks to additions like DeX and added cross-platform functionality with Windows 10) and an everyday handset.

There’s not enough on-board to really recommend an upgrade from the Note 8 or 9 — especially at that $1,099 price. People are holding onto their devices for longer, and for good reason (as detailed above). But if you need a new phone, are looking for something big and flashy and are willing to splurge, the Note continues to be the one to beat.


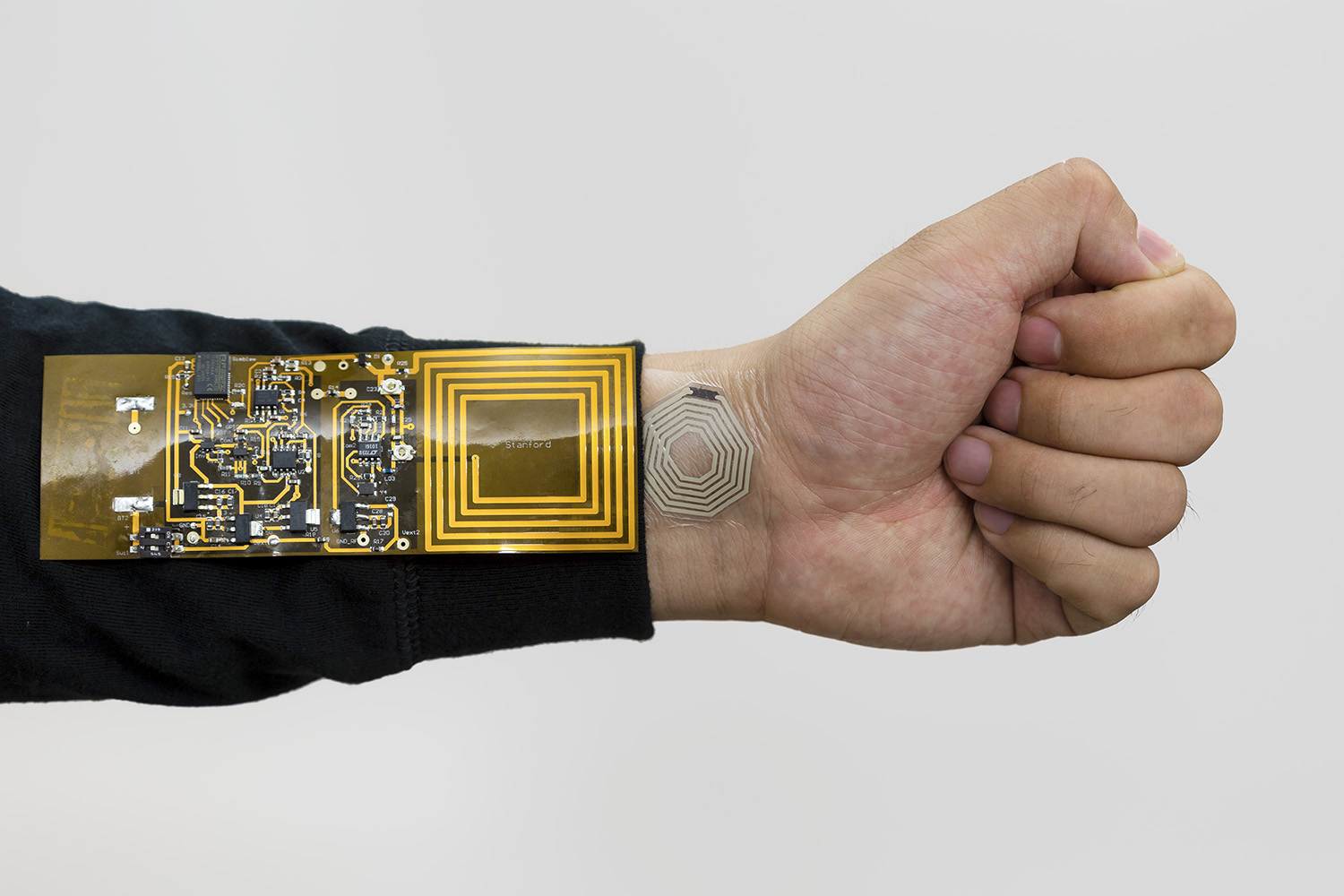

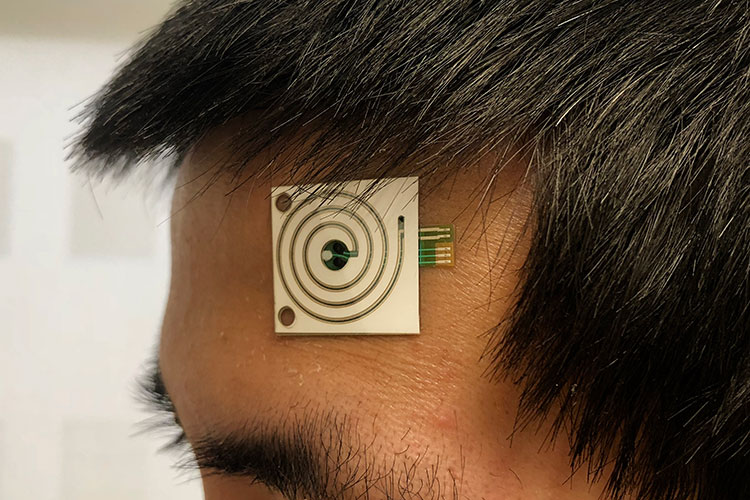

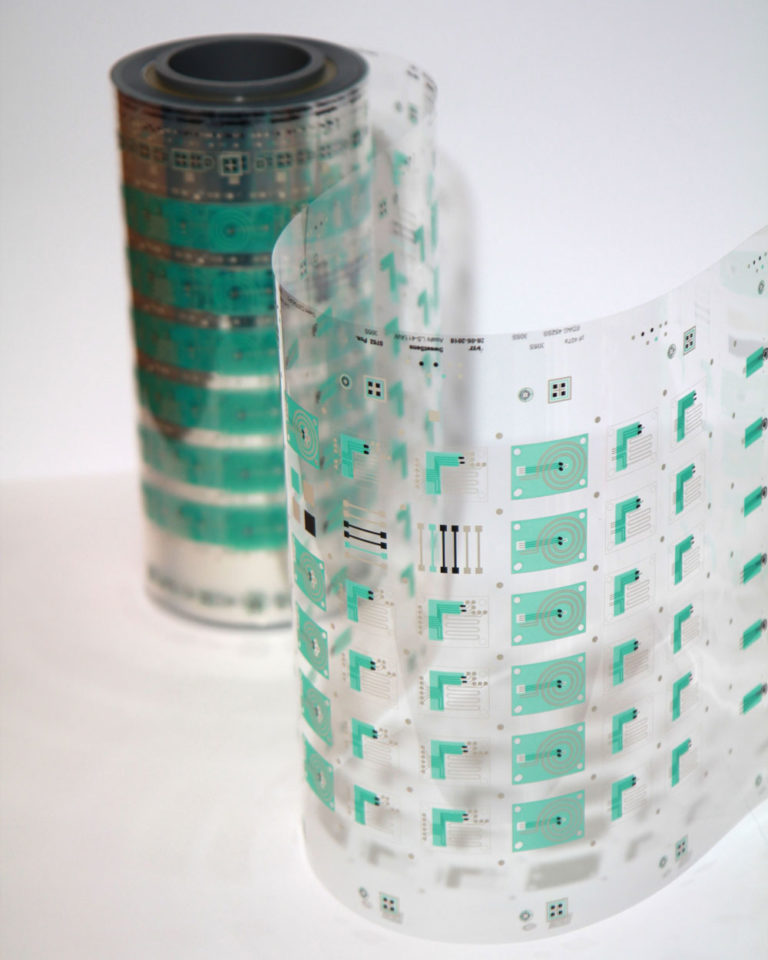

