How safe are your secrets? If you use Amazon’s Elastic Block Storage, you might want to check your settings.

New research just presented at the Def Con security conference reveals how companies, startups, and governments are inadvertently leaking their own files from the cloud.

You may have heard of exposed S3 buckets, the Amazon-hosted storage servers that are packed with customer data but are often misconfigured and inadvertently set to “public” for anyone to access. But you may not have heard about exposed EBS volumes, which pose as much of a risk, if not a greater one.

These elastic block storage (EBS) volumes are the “keys to the kingdom,” said Ben Morris, a senior security analyst at cybersecurity firm Bishop Fox, in a call with TechCrunch ahead of his Def Con talk. EBS volumes store all the data for cloud applications. “They have the secret keys to your applications and they have database access to your customers’ information,” he said.

“When you get rid of the hard disk for your computer, you know, you usually shred or wipe it completely,” he said. “But these public EBS volumes are just left for anyone to take and start poking at.”

He said that all too often cloud admins don’t choose the correct configuration settings, leaving EBS volumes inadvertently public and unencrypted. “That means anyone on the internet can download your hard disk and boot it up, attach it to a machine they control, and then start rifling through the disk to look for any kind of secrets,” he said.

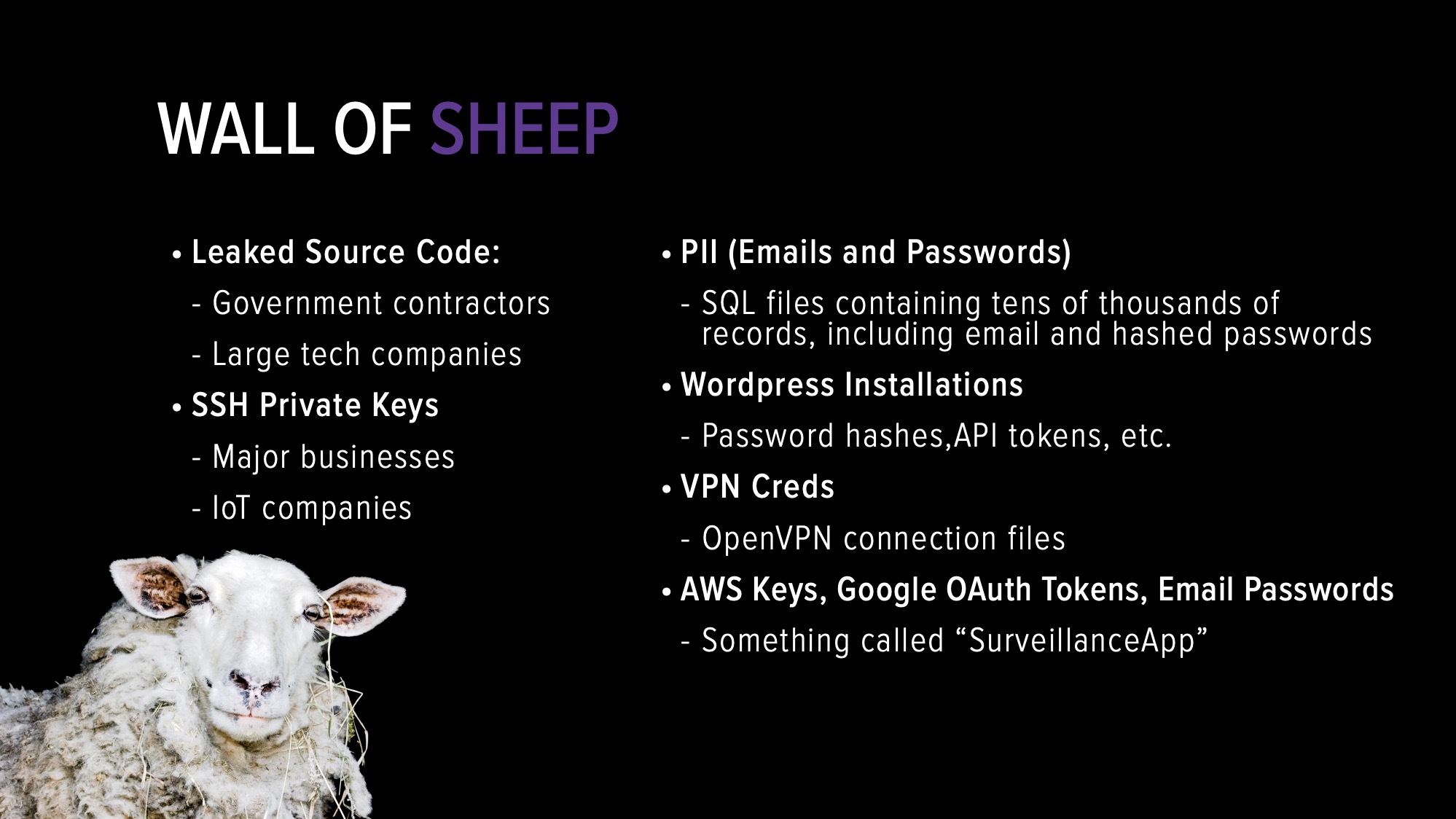

One of Morris’ Def Con slides, a display often known as the “Wall of Sheep,” noting the types of compromised data his research found. (Image: Ben Morris/Bishop Fox; supplied)

Morris built a tool that uses Amazon’s own internal volume search feature to query and scrape publicly exposed EBS volumes, then attaches each one, makes a copy and lists its contents on his system.

“If you expose the disk for even just a couple of minutes, our system will pick it up and make a copy of it,” he said.

Building up a database of exposed volumes took him two months and just a few hundred dollars of Amazon cloud resources. Once he validates each volume, he deletes the data.

Morris found dozens of volumes exposed publicly in one region alone, he said, containing application keys, critical user or administrative credentials, source code, and more. He found volumes belonging to several major companies, including healthcare providers and tech companies.

He also found VPN configurations, which he said could allow him to tunnel into a corporate network. Morris said he did not use any credentials or sensitive data, as doing so would be unlawful.

Among the most damaging things he found, Morris said, was a volume belonging to a government contractor, which he did not name, that provided data storage services to federal agencies. “On their website, they brag about holding this data,” he said, referring to data ranging from intelligence collected from messages sent to and from the so-called Islamic State terror group to records of border crossings.

“Those are the kind of things I would definitely not want to be exposed to the public internet,” he said.

He estimates the figure could be as many as 1,250 exposures across all Amazon cloud regions.

Morris plans to release his proof-of-concept code in the coming weeks.

“I’m giving companies a couple of weeks to go through their own disks and make sure that they don’t have any accidental exposures,” he said.
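
For admins who want to run that kind of check, here is a minimal Python sketch. It is not Morris’ tool. It assumes the exposure takes the form of EBS snapshots that an account has shared with all AWS accounts, and that the account is queried through the EC2 `describe_snapshots` call as exposed by the `boto3` library. The function name `find_public_snapshots` and the example region are illustrative choices, not anything from the research.

```python
def find_public_snapshots(ec2_client):
    """Return IDs of snapshots owned by this account that any AWS account can restore.

    `ec2_client` is assumed to behave like a boto3 EC2 client: it must offer
    describe_snapshots(**kwargs) and return a dict with "Snapshots" and,
    when more results remain, "NextToken".
    """
    public_ids = []
    next_token = None
    while True:
        # OwnerIds=["self"] limits results to our own snapshots;
        # RestorableByUserIds=["all"] keeps only the publicly shared ones.
        kwargs = {"OwnerIds": ["self"], "RestorableByUserIds": ["all"]}
        if next_token:
            kwargs["NextToken"] = next_token
        page = ec2_client.describe_snapshots(**kwargs)
        for snapshot in page.get("Snapshots", []):
            public_ids.append(snapshot["SnapshotId"])
        next_token = page.get("NextToken")
        if not next_token:
            break
    return public_ids


# Example use (needs boto3 installed and AWS credentials configured):
#   import boto3
#   client = boto3.client("ec2", region_name="us-east-1")
#   for snapshot_id in find_public_snapshots(client):
#       print("publicly restorable:", snapshot_id)
```

An empty result for a region suggests no snapshots in that account are publicly shared there. The check is per region, so it would need to be repeated for each region the account uses.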