New research into how European consumers interact with the cookie consent mechanisms which have proliferated since a major update to the bloc’s online privacy rules last year casts an unflattering light on widespread manipulation of a system that’s supposed to protect consumer rights.

As Europe’s General Data Protection Regulation (GDPR) came into force in May 2018, bringing in a tough new regime of fines for non-compliance, websites responded by popping up legal disclaimers which signpost visitor tracking activities. Some of these cookie notices even ask for consent to track you.

But many don’t — even now, more than a year later.

The study, which looked at how consumers interact with different designs of cookie pop-ups and how various design choices can nudge and influence people’s privacy choices, also suggests consumers are suffering a degree of confusion about how cookies function, as well as being generally mistrustful of the term ‘cookie’ itself. (With such baked-in tricks, who can blame them?)

The researchers conclude that if consent to drop cookies were being collected in a way that’s compliant with the EU’s existing privacy laws, only a tiny fraction of consumers would agree to be tracked.

The paper, which we’ve reviewed in draft ahead of publication, is co-authored by academics at Ruhr-University Bochum, Germany, and the University of Michigan in the US — and entitled: (Un)informed Consent: Studying GDPR Consent Notices in the Field.

The researchers ran a number of studies, gathering ~5,000 cookie notices from screengrabs of leading websites to compile a snapshot (derived from a random sub-sample of 1,000) of the different cookie consent mechanisms in play, in order to paint a picture of current implementations.

They also worked with a German ecommerce website over a period of four months to study how more than 82,000 unique visitors to the site interacted with various cookie consent designs, which the researchers tweaked in order to explore how different defaults and design choices affected individuals’ privacy choices.

Their industry snapshot of cookie consent notices found that the majority are placed at the bottom of the screen (58%), do not block interaction with the website (93%), and offer no options other than a confirmation button that does not do anything (86%). So no choice at all then.

A majority also try to nudge users towards consenting (57%), for example with ‘dark pattern’ techniques such as highlighting the ‘agree’ button in color (which if clicked accepts privacy-unfriendly defaults) while displaying a much less visible link to ‘more options’, so that pro-privacy choices are buried off screen.

And while they found that nearly all cookie notices (92%) contained a link to the site’s privacy policy, only 39% mention the specific purpose of the data collection and just 21% say who can access the data.

The GDPR updated the EU’s long-standing digital privacy framework, with key additions including tightening the rules around consent as a legal basis for processing people’s data — which the regulation says must be specific (purpose limited), informed and freely given for consent to be valid.

Even so, since May last year there has been an outgrowth of cookie ‘consent’ mechanisms popping up or sliding atop websites that still don’t offer EU visitors the necessary privacy choices, per the research.

“Given the legal requirements for explicit, informed consent, it is obvious that the vast majority of cookie consent notices are not compliant with European privacy law,” the researchers argue.

“Our results show that a reasonable amount of users are willing to engage with consent notices, especially those who want to opt out or do not want to opt in. Unfortunately, current implementations do not respect this and the large majority offers no meaningful choice.”

The researchers also record a large differential in interaction rates with consent notices — of between 5 and 55% — generated by tweaking positions, options, and presets on cookie notices.

This is where consent gets manipulated — to flip visitors’ preference for privacy.

They found that the more choices offered in a cookie notice, the more likely visitors were to decline the use of cookies. (Which is an interesting finding in light of the vendor laundry lists frequently baked into the so-called “transparency and consent framework” which the industry association, the Interactive Advertising Bureau (IAB), has pushed as the standard for its members to use to gather GDPR consents.)

“The results show that nudges and pre-selection had a high impact on user decisions, confirming previous work,” the researchers write. “It also shows that the GDPR requirement of privacy by default should be enforced to make sure that consent notices collect explicit consent.”

Here’s a section from the paper discussing what they describe as “the strong impact of nudges and pre-selections”:

Overall the effect size between nudging (as a binary factor) and choice was CV=0.50. For example, in the rather simple case of notices that only asked users to confirm that they will be tracked, more users clicked the “Accept” button in the nudge condition, where it was highlighted (50.8% on mobile, 26.9% on desktop), than in the non-nudging condition where “Accept” was displayed as a text link (39.2% m, 21.1% d). The effect was most visible for the category- and vendor-based notices, where all checkboxes were pre-selected in the nudging condition, while they were not in the privacy-by-default version. On the one hand, the pre-selected versions led around 30% of mobile users and 10% of desktop users to accept all third parties. On the other hand, only a small fraction (< 0.1%) allowed all third parties when given the opt-in choice and around 1 to 4 percent allowed one or more third parties (labeled “other” in 4). None of the visitors with a desktop allowed all categories. Interestingly, the number of non-interacting users was highest on average for the vendor-based condition, although it took up the largest part of any screen since it offered six options to choose from.
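
For readers unfamiliar with the statistic: assuming the “CV” in that excerpt denotes Cramér’s V (a standard measure of association between two categorical variables, running from 0 for no association to 1 for perfect association), the sketch below shows roughly how such a value is computed from a table of counts. The counts are invented purely for illustration and are not the study’s data, and the function name is our own.

```python
import math


def cramers_v(table):
    """Cramér's V for a contingency table given as a list of rows of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)

    # Pearson chi-squared: compare observed counts with the counts expected
    # if the row variable and column variable were independent.
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed - expected) ** 2 / expected

    # Normalise by sample size and the smaller table dimension minus one.
    k = min(len(table), len(table[0])) - 1
    return math.sqrt(chi2 / (n * k))


# Hypothetical counts, NOT taken from the paper.
# Rows: nudged notice / non-nudged notice. Columns: accepted / did not accept.
hypothetical_counts = [
    [500, 500],
    [250, 750],
]
print(round(cramers_v(hypothetical_counts), 2))  # 0.26
```

On these made-up numbers, halving the acceptance rate between conditions yields a V of about 0.26, which gives a sense of how strong an association the reported 0.50 would represent.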

The key implication is that just 0.1% of site visitors would freely choose to enable all cookie categories/vendors — i.e. when not being forced to do so by a lack of choice or via nudging with manipulative dark patterns (such as pre-selections).

That rises a fraction, to between 1% and 4%, for those who would enable some cookie categories in the same privacy-by-default scenario.

“Our results… indicate that the privacy-by-default and purposed-based consent requirements put forth by the GDPR would require websites to use consent notices that would actually lead to less than 0.1 % of active consent for the use of third parties,” they write in conclusion.

They do flag some limitations with the study, pointing out that the dataset behind the 0.1% figure is biased: the nationality of visitors is not generally representative of public Internet users, and the data was generated from a single retail site. But they supplemented their findings with data from a company (Cookiebot) which provides cookie notices as a SaaS, saying its data indicated a higher ‘accept all’ click rate, though still only marginally higher: just 5.6%.

Hence the conclusion that if European web users were given an honest and genuine choice over whether or not they get tracked around the Internet, the overwhelming majority would choose to protect their privacy by rejecting tracking cookies.

This is an important finding because GDPR is unambiguous in stating that if an Internet service is relying on consent as a legal basis to process visitors’ personal data it must obtain consent before processing data (so before a tracking cookie is dropped) — and that consent must be specific, informed and freely given.

Yet, as the study confirms, it really doesn’t take much clicking around the regional Internet to find a gaslighting cookie notice that pops up with a mocking message saying by using this website you’re consenting to your data being processed how the site sees fit — with just a single ‘Ok’ button to affirm your lack of say in the matter.

It’s also all too common to see sites that nudge visitors towards a big brightly colored ‘click here’ button to accept data processing — squirrelling any opt outs into complex sub-menus that can sometimes require hundreds of individual clicks to deny consent per vendor.

You can even find websites that gate their content entirely unless or until a user clicks ‘accept’ — aka a cookie wall. (A practice that has recently attracted regulatory intervention.)

Nor can the current mess of cookie notices be blamed on a lack of specific guidance on what a valid and therefore legal cookie consent looks like. At least not any more. Here, for example, is a myth-busting blog which the UK’s Information Commissioner’s Office (ICO) published last month that’s pretty clear on what can and can’t be done with cookies.

For instance on cookie walls the ICO writes: “Using a blanket approach such as this is unlikely to represent valid consent. Statements such as ‘by continuing to use this website you are agreeing to cookies’ is not valid consent under the higher GDPR standard.” (The regulator goes into more detailed advice here.)

France’s data watchdog, the CNIL, also published its own detailed guidance last month, if you prefer to digest cookie guidance in the language of love and diplomacy.

(Those of you reading TechCrunch back in January 2018 may also remember this sage plain English advice from our GDPR explainer: “Consent requirements for processing personal data are also considerably strengthened under GDPR — meaning lengthy, inscrutable, pre-ticked T&Cs are likely to be unworkable.” So don’t say we didn’t warn you.)

Nor are Europe’s data protection watchdogs lacking in complaints about improper applications of ‘consent’ to justify processing people’s data.

Indeed, ‘forced consent’ was the substance of a series of linked complaints by the pro-privacy NGO noyb, which targeted T&Cs used by Facebook, WhatsApp, Instagram and Google Android immediately after GDPR started being applied in May last year.

While not cookie notice specific, this set of complaints speaks to the same underlying principle — i.e. that EU users must be provided with a specific, informed and free choice when asked to consent to their data being processed. Otherwise the ‘consent’ isn’t valid.

So far Google is the only company to be hit with a penalty as a result of that first wave of consent-related GDPR complaints; France’s data watchdog issued it a $57M fine in January.

But the Irish DPC confirmed to us that three of the 11 open investigations it has into Facebook and its subsidiaries were opened after noyb’s consent-related complaints. (“Each of these investigations are at an advanced stage and we can’t comment any further as these investigations are ongoing,” a spokeswoman told us. So, er, watch that space.)

The problem, where EU cookie consent compliance is concerned, looks to be both a failure of enforcement and a lack of regulatory alignment — the latter as a consequence of the ePrivacy Directive (which most directly concerns cookies) still not being updated, generating confusion (if not outright conflict) with the shiny new GDPR.

However the ICO’s advice on cookies directly addresses claimed inconsistencies between ePrivacy and GDPR, stating plainly that Recital 25 of the former (which states: “Access to specific website content may be made conditional on the well-informed acceptance of a cookie or similar device, if it is used for a legitimate purpose”) does not, in fact, sanction gating your entire website behind an ‘accept or leave’ cookie wall.

Here’s what the ICO says on Recital 25 of the ePrivacy Directive:

- ‘specific website content’ means that you should not make ‘general access’ subject to conditions requiring users to accept non-essential cookies – you can only limit certain content if the user does not consent;

- the term ‘legitimate purpose’ refers to facilitating the provision of an information society service – ie, a service the user explicitly requests. This does not include third parties such as analytics services or online advertising;

So no cookie wall; and no partial walls that force a user to agree to ad targeting in order to access the content.

It’s worth pointing out that other types of privacy-friendly online advertising are available with which to monetize visits to a website. (And research suggests targeted ads offer only a tiny premium over non-targeted ads, even as publishers choosing a privacy-hostile ads path must now factor the costs of data protection compliance into their calculations, as well as the cost and risk of massive GDPR fines if their security fails or they’re found to have violated the law.)

Negotiations to replace the now very long-in-the-tooth ePrivacy Directive — with an up-to-date ePrivacy Regulation which properly takes account of the proliferation of Internet messaging and all the ad tracking techs that have sprung up in the interim — are the subject of very intense lobbying, including from the adtech industry desperate to keep a hold of cookie data. But EU privacy law is clear.

“[Cookie consent]’s definitely broken (and has been for a while). But the GDPR is only partly to blame, it was not intended to fix this specific problem. The uncertainty of the current situation is caused [by] the delay of the ePrivacy regulation that was put on hold (thanks to lobbying),” says Martin Degeling, one of the research paper’s co-authors, when we suggest European Internet users are being subjected to a lot of ‘consent theatre’ (ie noisy yet non-compliant cookie notices), which in turn is causing knock-on problems of consumer mistrust and consent fatigue over all these useless pop-ups, and so works against the core aims of the EU’s data protection framework.

“Consent fatigue and mistrust is definitely a problem,” he agrees. “Users that have experienced that clicking ‘decline’ will likely prevent them from using a site are likely to click ‘accept’ on any other site just because of one bad experience and regardless of what they actually want (which is in most cases: not be tracked).”

“We don’t have strong statistical evidence for that but users reported this in the survey,” he adds, citing a poll the researchers also ran asking site visitors about their privacy choices and general views on cookies.

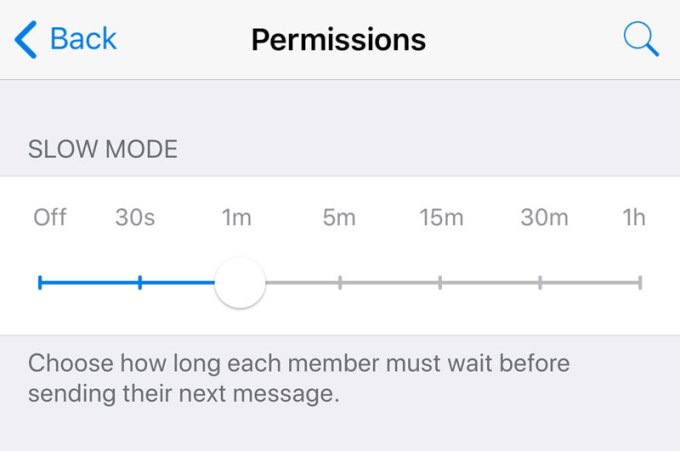

Degeling says he and his co-authors are in favor of a consent mechanism that would enable web users to specify their choice at a browser level — rather than the current mess and chaos of perpetual, confusing and often non-compliant per site pop-ups. Although he points out some caveats.

“DNT [Do Not Track] is probably also not GDPR compliant as it only knows one purpose. Nevertheless something similar would be great,” he tells us. “But I’m not sure if shifting the responsibility to browser vendors to design an interface through which they can obtain consent will lead to the best results for users — the interfaces that we see now, e.g. with regard to cookies, are not a good solution either.

“And the conflict of interest for Google with Chrome are obvious.”

The EU’s unfortunate regulatory snafu around privacy — in that it now has one modernized, world-class privacy regulation butting up against an outdated directive (whose progress keeps being blocked by vested interests intent on being able to continue steamrollering consumer privacy) — likely goes some way to explaining why Member States’ data watchdogs have generally been loath, so far, to show their teeth where the specific issue of cookie consent is concerned.

At least for an initial period the hope among data protection agencies (DPAs) was likely that ePrivacy would be updated and so they should wait and see.

They have also undoubtedly been providing data processors with time to get their data houses and cookie consents in order. But the frictionless interregnum while GDPR was allowed to ‘bed in’ looks unlikely to last much longer.

Firstly because a law that’s not enforced isn’t worth the paper it’s written on (and EU fundamental rights are a lot older than the GDPR). Secondly, with the ePrivacy update still blocked, DPAs have demonstrated they’re not just going to sit on their hands and watch privacy rights be rolled back, hence their putting out guidance that clarifies what GDPR means for cookies. They’re drawing lines in the sand, rather than waiting for ePrivacy to do it (which also guards against the latter being used by lobbyists as a vehicle to try to attack and water down GDPR).

And, thirdly, Europe’s political institutions and policymakers have been dining out on the geopolitical attention their shiny privacy framework (GDPR) has attained.

Much has been made at the highest levels in Europe of being able to point to US counterparts caught on the hop by ongoing tech privacy and security scandals, while EU policymakers savor the schadenfreude of seeing those counterparts forced to ask publicly whether it’s time for America to have its own GDPR.

With its extraterritorial scope, GDPR was always intended to stamp Europe’s rule-making prowess on the global map. EU lawmakers will feel they can comfortably check that box.

However they are also aware the world is watching closely and critically, which makes enforcement a key piece of the puzzle. It must slot in too. They need the GDPR to work on paper and be seen to be working in practice.

So the current cookie mess is a problematic signal which risks signposting regulatory failure — and that simply isn’t sustainable.

A spokesperson for the European Commission told us it cannot comment on specific research but said: “The protection of personal data is a fundamental right in the European Union and a topic the Juncker commission takes very seriously.”

“The GDPR strengthens the rights of individuals to be in control of the processing of personal data, it reinforces the transparency requirements in particular on the information that is crucial for the individual to make a choice, so that consent is given freely, specific and informed,” the spokesperson added.

“Cookies, insofar as they are used to identify users, qualify as personal data and are therefore subject to the GDPR. Companies do have a right to process their users’ data as long as they receive consent or if they have a legitimate interest.”

All of which suggests that the movement, when it comes, must come from a reforming adtech industry.

With robust privacy regulation in place the writing is now on the wall for unfettered tracking of Internet users for the kind of high velocity, real-time trading of people’s eyeballs that the ad industry engineered for itself when no one knew what was being done with people’s data.

GDPR has already brought greater transparency. Once Europeans are no longer forced to trade away their privacy it’s clear they’ll vote with their clicks not to be ad-stalked around the Internet too.

The current chaos of non-compliant cookie notices is thus a signpost pointing at an underlying privacy lag — and likely also the last gasp signage of digital business models well past their sell-by-date.