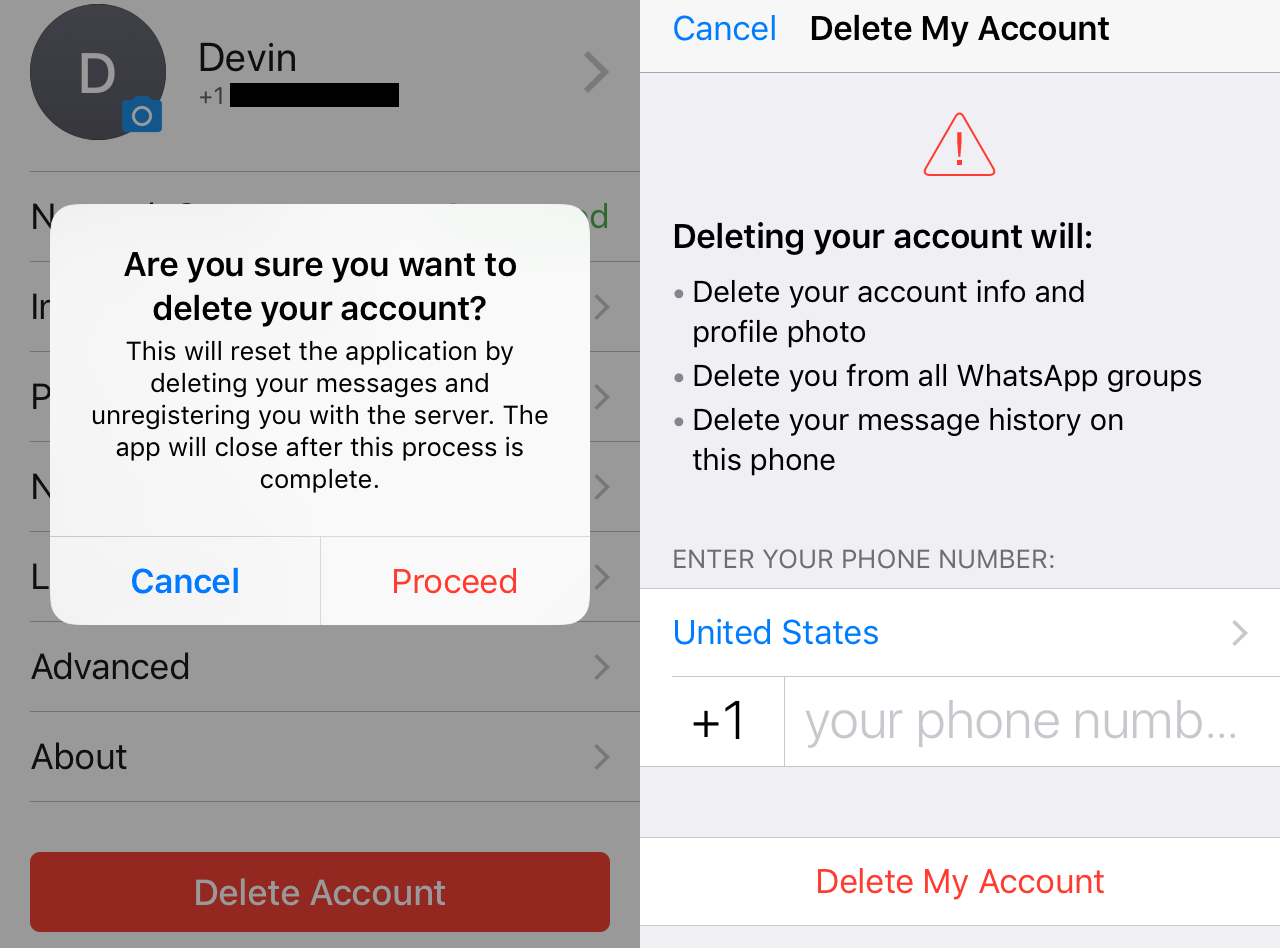

Facebook obtained personal and sensitive device data on about 187,000 users of its now-defunct Research app, which Apple banned earlier this year after the app violated its rules.

The social media giant said in a letter to lawmakers — which TechCrunch obtained — that it collected data on 31,000 users in the U.S., including 4,300 teenagers. The rest of the collected data came from users in India.

Earlier this year, a TechCrunch investigation found both Facebook and Google were abusing their Apple-issued enterprise developer certificates, which are designed to let employees run iPhone and iPad apps used only inside the company. The investigation found the companies were building and providing apps for consumers outside Apple’s App Store, in violation of Apple’s rules. The apps paid users in return for data on how they used their devices, and gave the companies insight into app habits by gaining access to all of the network data going in and out of the device.

Apple banned the apps by revoking Facebook’s enterprise developer certificate, and later Google’s. The revocation also knocked offline both companies’ fleets of internal iPhone and iPad apps, which relied on the same certificates.

But in response to lawmakers’ questions, Apple said it didn’t know how many devices installed Facebook’s rule-violating app.

“We know that the provisioning profile for the Facebook Research app was created on April 19, 2017, but this does not necessarily correlate to the date that Facebook distributed the provisioning profile to end users,” said Timothy Powderly, Apple’s director of federal affairs, in his letter.

Facebook said the app dated back to 2016.

TechCrunch also obtained the letters that Apple and Google sent to lawmakers in early March, which were never made public.

These “research” apps relied on willing participants downloading the app from outside the app store and using the Apple-issued developer certificates to install it. The apps would then install a root network certificate, allowing them to collect all the data leaving the device, such as web browsing histories, encrypted messages, and mobile app activity, and potentially data from participants’ friends, for competitive analysis.

A response by Facebook about the number of users involved in Project Atlas. (Image: TechCrunch)

In Facebook’s case, the research app — dubbed Project Atlas — was a repackaged version of its Onavo VPN app, which Facebook was forced to remove from Apple’s App Store last year for gathering too much device data.

Just this week, Facebook relaunched its research app as Study, available only on Google Play and only to users approved through Facebook’s research partner, Applause. Facebook said it would be more transparent about how it collects user data.

Facebook’s vice-president of public policy Kevin Martin defended the company’s use of enterprise certificates, saying it “was a relatively well-known industry practice.” When asked, a Facebook spokesperson didn’t quantify this further. Later, TechCrunch found dozens of apps that used enterprise certificates to evade the app store.

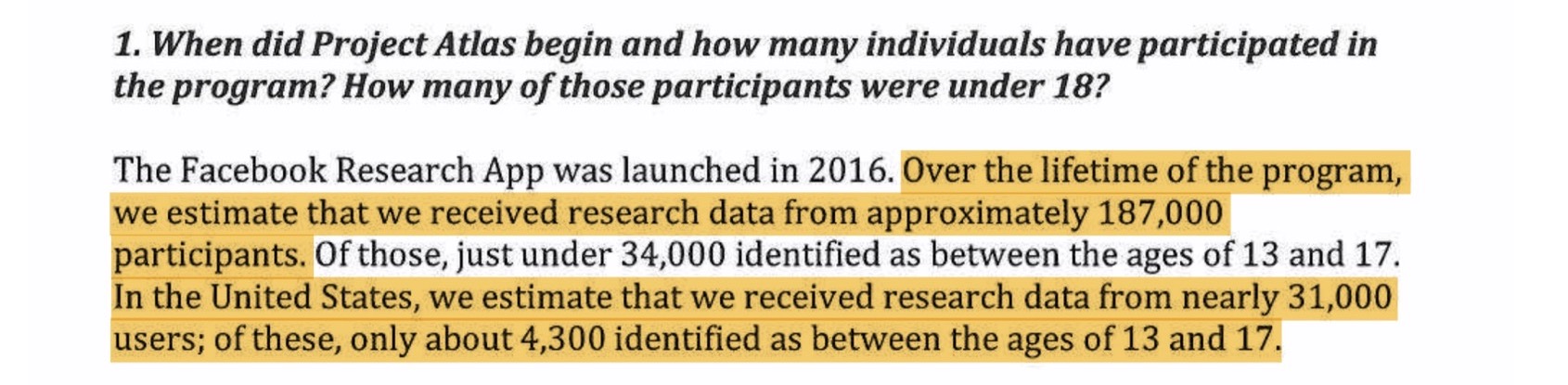

Facebook previously said it “specifically ignores information shared via financial or health apps.” In its letter to lawmakers, Facebook stuck to its guns, saying its data collection was focused on “analytics,” but confirmed “in some isolated circumstances the app received some limited non-targeted content.”

“We did not review all of the data to determine whether it contained health or financial data,” said a Facebook spokesperson. “We have deleted all user-level market insights data that was collected from the Facebook Research app, which would include any health or financial data that may have existed.”

But Facebook didn’t say what kind of data it received, only that the app didn’t decrypt “the vast majority” of data sent by a device.

Facebook describing the type of data it collected — including “limited, non-targeted content.” (Image: TechCrunch)

Google’s letter, penned by public policy vice-president Karan Bhatia, did not provide a number of devices or users, saying only that its app was a “small scale” program. When reached, a Google spokesperson did not comment by our deadline.

Google also said it found “no other apps that were distributed to consumer end users,” but confirmed several other apps used by the company’s partners and contractors, which no longer rely on enterprise certificates.

Google explaining which of its apps were improperly using Apple-issued enterprise certificates. (Image: TechCrunch)

Apple told TechCrunch that both Facebook and Google “are in compliance” with its rules as of the time of publication. At its annual developer conference last week, the company said it now “reserves the right to review and approve or reject any internal use application.”

Facebook’s willingness to collect this data from teenagers, despite constant scrutiny from press and regulators, demonstrates how valuable the company considers market research on its competitors. With its paid research program restarted, albeit with greater transparency, the company continues to leverage its data collection to keep ahead of its rivals.

Facebook and Google came off worse in the enterprise app abuse scandal, but critics said that, in being able to revoke enterprise certificates, Apple retains too much control over what content customers have on their devices.

The Justice Department and the Federal Trade Commission are said to be examining the big four tech giants — Apple, Amazon, Facebook, and Google-owner Alphabet — for potentially falling foul of U.S. antitrust laws.