Mark Zuckerberg: “The future is private”. Sundar Pichai: ~The present is private~. While both CEOs made protecting user data a central theme of their conference keynotes this month, Facebook’s product updates were mostly vague vaporware while Google’s were either ready to ship or ready to demo. The contrast highlights the divergence in strategy between the two tech giants.

For Facebook, privacy is a talking point meant to boost confidence in sharing, deter regulators, and repair its battered image. For Google, privacy is functional, going hand-in-hand with on-device data processing to make features faster and more widely accessible.

Everyone wants tech to be more private, but we must discern between promises and delivery. Like “mobile”, “on-demand”, “AI”, and “blockchain” before it, “privacy” can’t be taken at face value. We deserve improvements to the core of how our software and hardware work, not cosmetic add-ons and instantiations no one is asking for.

AMY OSBORNE/AFP/Getty Images

At Facebook’s F8 last week, Zuckerberg told us that “Privacy gives us the freedom to be ourselves” and reiterated that this would happen through ephemerality and secure data storage. He said Messenger and Instagram Direct will become encrypted…eventually…which Zuckerberg had already announced in January and detailed in March. We didn’t get the Clear History feature that Zuckerberg made the privacy centerpiece of his 2018 conference, or anything about the Data Transfer Project that’s been silent for the 10 months since its reveal.

What users did get was a clumsy joke from Zuckerberg: “I get that a lot of people aren’t sure that we’re serious about this. I know that we don’t exactly have the strongest reputation on privacy right now to put it lightly. But I’m committed to doing this well.” No one laughed. At least he admitted that “It’s not going to happen overnight.”

But it shouldn’t have to. Facebook made its first massive privacy mistake in 2007 with Beacon, which quietly relayed your off-site ecommerce and web activity to your friends. It’s had 12 years, a deal with the FTC promising to improve, countless screwups and apologies, the democracy-shaking Cambridge Analytica scandal, and hours of being grilled by Congress to get serious about the problem. That makes it clear that if “the future is private”, then the past wasn’t. Facebook is too late here to receive the benefit of the doubt.

At Google’s I/O, we saw demos from Pichai showing how “our work on privacy and security is never done. And we want to do more to stay ahead of constantly evolving user expectations.” Instead of waiting to fall so far behind that users demand more privacy, Google has been steadily working on it for the past decade since it introduced Chrome’s Incognito mode. It has moved away from using Gmail content to target ads and from allowing any developer to request access to your email. And now when the company is hit with scandals, it’s typically over its frightening efficiency, as with its cancelled Project Maven AI military tech, not its creepiness.

Google made more progress on privacy in low-key updates in the runup to I/O than Facebook did on stage. In the past month it launched the ability to use your Android device as a physical security key, plus a new auto-delete feature, rolling out in the coming weeks, that erases your web and app activity after 3 or 18 months. Then in its keynote today, it published “privacy commitments” for Made By Google products like Nest detailing exactly how they use your data and your control over that. For example, the new Nest Hub Max does all its Face Match processing on-device so facial recognition data isn’t sent to Google. Failing to note there’s a microphone in its Nest security alarm did cause an uproar in February, but the company has already course-corrected.

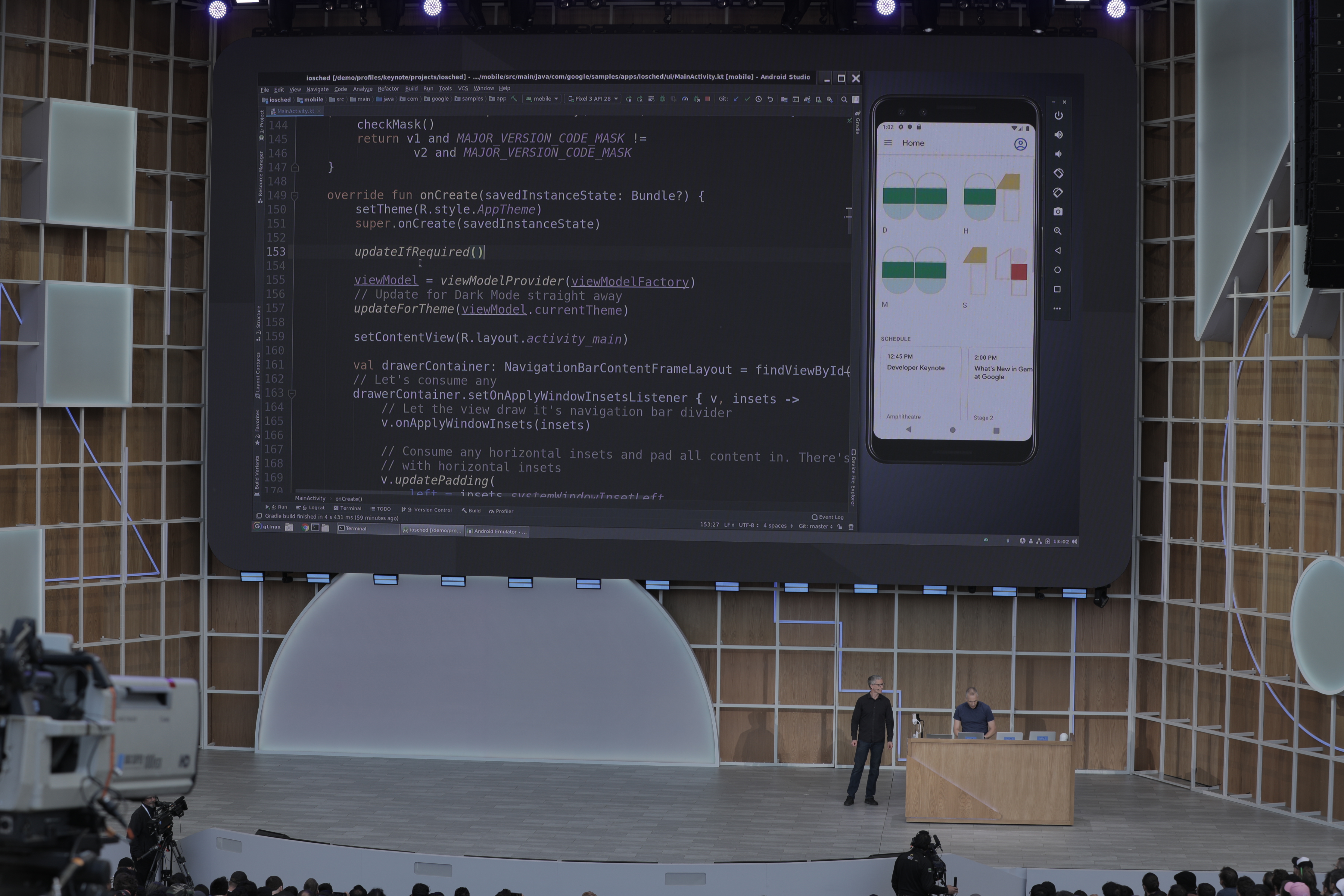

That concept of on-device processing is a hallmark of the new Android 10 Q operating system. Opening in beta to developers today, it comes with almost 50 new security and privacy features like TLS 1.3 support and MAC address randomization. Google Assistant will now be better protected, Pichai told a cheering crowd. “Further advances in deep learning have allowed us to combine and shrink the 100 gigabyte models down to half a gigabyte, small enough to bring it onto mobile devices.” This makes Assistant not only more private, but fast enough that it’s quicker to navigate your phone by voice than touch. Here, privacy and utility intertwine.

The result is that Google can listen to video chats and caption them for you in real-time, transcribe in-person conversations, or relay aloud your typed responses to a phone call without transmitting audio data to the cloud. That could be a huge help if you’re hearing- or vision-impaired, or just have your hands full. A lot of the new Assistant features coming to Google Pixel phones this year will even work in Airplane mode. Pichai says that “Gboard is already using federated learning to improve next word prediction, as well as emoji prediction across 10s of millions of devices” by using on-phone processing so only improvements to Google’s AI are sent to the company, not what you typed.

Google’s senior director of Android, Stephanie Cuthbertson, hammered the idea home, noting that “On device machine learning powers everything from these incredible breakthroughs like Live Captions to helpful everyday features like Smart Reply. And it does this with no user input ever leaving the phone, all of which protects user privacy.” Apple pioneered much of the on-device processing, and many Google features still rely on cloud computing, but the company is swiftly progressing.

When Google does make privacy announcements about things that aren’t about to ship, they’re significant and will be worth the wait. Chrome will implement anti-fingerprinting tech and change cookies to be more private so only the site that created them can use them. And Incognito Mode will soon come to the Google Maps and Search apps.

Pichai didn’t have to rely on grand proclamations, cringey jokes, or imaginary product changes to get his message across. Privacy isn’t just a means to an end for Google. It’s not a PR strategy. And it’s not some theoretical part of tomorrow like it is for Zuckerberg and Facebook. It’s now a natural part of building user-first technology…after 20 years of more cavalier attitudes towards data. That new approach is why the company dedicated to organizing the world’s information is getting so little backlash.

With privacy, it’s all about show, don’t tell.