A report by campaign group Avaaz examining how Facebook’s platform is being used to spread hate speech in the Assam region of North East India suggests the company is once again failing to prevent its platform from being turned into a weapon to fuel ethnic violence.

Assam has a long-standing Muslim minority population but ethnic minorities in the state look increasingly vulnerable after India’s Hindu nationalist government pushed forward with a National Register of Citizens (NRC), which has resulted in the exclusion from that list of nearly 1.9 million people — mostly Muslims — putting them at risk of statelessness.

In July the United Nations expressed grave concern over the NRC process, saying there’s a risk of arbitrary expulsion and detention, with those excluded being referred to Foreigners’ Tribunals where they have to prove they are not “irregular”.

At the same time, the UN warned of the rise of hate speech in Assam being spread via social media — saying this is contributing to increasing instability and uncertainty for millions in the region. “This process may exacerbate the xenophobic climate while fuelling religious intolerance and discrimination in the country,” it wrote.

There’s an awful sense of deja-vu about these warnings. In March 2018 the UN criticized Facebook for failing to prevent its platform being used to fuel ethnic violence against the Rohingya people in the neighboring country of Myanmar — saying the service had played a “determining role” in that crisis.

Facebook’s response to devastating criticism from the UN looks like wafer-thin crisis PR to paper over the ethical cracks in its ad business, given the same sorts of alarm bells are being sounded again, just over a year later. (If we measure the company by the lofty goals it attached to a director of human rights policy job last year — when Facebook wrote that the responsibilities included “conflict prevention” and “peace-building” — it’s surely been an abject failure.)

Avaaz’s report on hate speech in Assam takes direct aim at Facebook’s platform, saying it’s being used as a conduit for whipping up anti-Muslim hatred.

In the report, entitled Megaphone for Hate: Disinformation and Hate Speech on Facebook During Assam’s Citizenship Count, the group says it analysed 800 Facebook posts and comments relating to Assam and the NRC, using keywords from the immigration discourse in Assamese, assessing them against the three tiers of prohibited hate speech set out in Facebook’s Community Standards.

Avaaz found that at least 26.5% of the posts and comments constituted hate speech. These posts had been shared on Facebook more than 99,650 times — adding up to at least 5.4 million views for violent hate speech targeting religious and ethnic minorities, according to its analysis.

Bengali Muslims are a particular target on Facebook in Assam, per the report, which found comments referring to them as “criminals,” “rapists,” “terrorists,” “pigs,” and “dogs”, among other dehumanizing terms.

In further disturbing comments there were calls for people to “poison” daughters, and legalise female foeticide, as well as several posts urging “Indian” women to be protected from “rape-obsessed foreigners”.

Avaaz suggests its findings are just a drop in the ocean of hate speech that it says is drowning Assam via Facebook and other social media. But it accuses Facebook directly of failing to provide adequate human resource to police hate speech spread on its dominant platform.

Commenting in a statement, Alaphia Zoyab, senior campaigner, said: “Facebook is being used as a megaphone for hate, pointed directly at vulnerable minorities in Assam, many of whom could be made stateless within months. Despite the clear and present danger faced by these people, Facebook is refusing to dedicate the resources required to keep them safe. Through its inaction, Facebook is complicit in the persecution of some of the world’s most vulnerable people.”

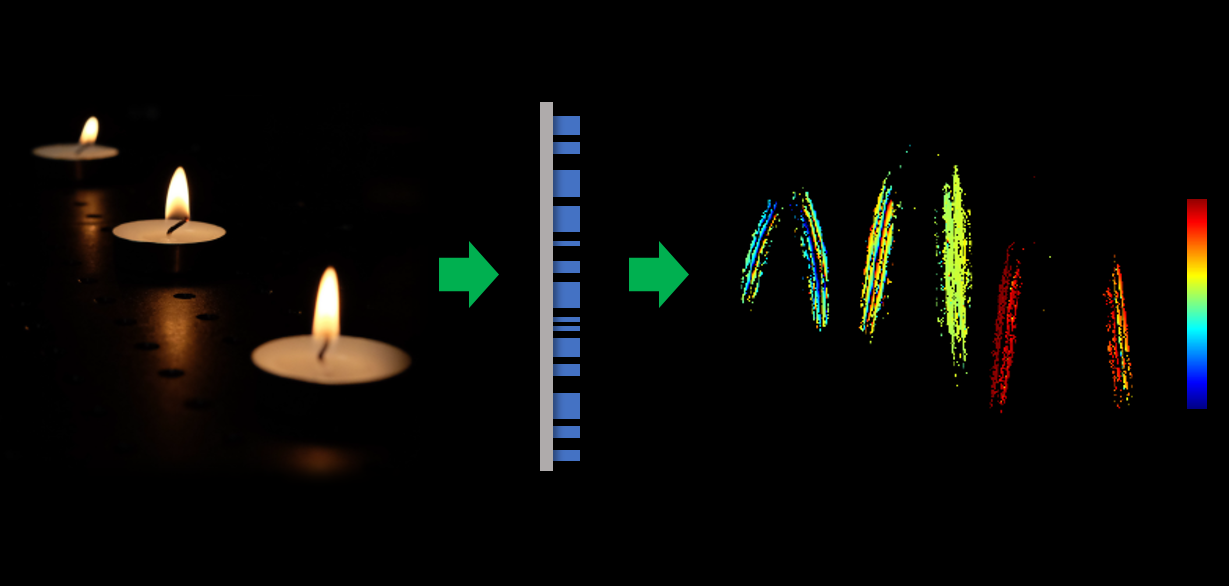

Its key complaint is that Facebook continues to rely on AI to detect hate speech which has not been reported to it by human users — using its limited pool of (human) content moderator staff to review pre-flagged content, rather than proactively detect it.

Facebook founder Mark Zuckerberg has previously said AI has a very long way to go to reliably detect hate speech. Indeed, he’s suggested it may never be able to do that.

In April 2018 he told US lawmakers it might take five to ten years to develop “AI tools that can get into some of the linguistic nuances of different types of content to be more accurate, to be flagging things to our systems”, while admitting: “Today we’re just not there on that.”

That amounts to an admission that in regions such as Assam, where inter-ethnic tensions are being whipped up in a politically charged atmosphere that’s also encouraging violence, Facebook is essentially asleep on the job. The job of enforcing its own ‘Community Standards’ and preventing its platform being weaponized to amplify hate and harass the vulnerable, to be clear.

Avaaz says it flagged 213 of “the clearest examples” of hate speech which it found directly to Facebook — including posts from an elected official and pages of a member of an Assamese rebel group banned by the Indian Government. The company removed 96 of these posts following its report.

It argues there are similarities in the type of hate speech being directed at ethnic minorities in Assam via Facebook and that which was targeted at Rohingya people in Myanmar, also on Facebook, while noting that the context is different. But it did also find hateful content on Facebook targeting Rohingya people in India.

It is calling on Facebook to do more to protect vulnerable minorities in Assam, arguing it should not rely solely on automated tools for detecting hate speech — and should instead apply a “human-led ‘zero tolerance’ policy” against hate speech, starting by beefing up moderators’ expertise in local languages.

It also recommends Facebook launch an early warning system within its Strategic Response team, again based on human content moderation — and do so for all regions where the UN has warned of the rise of hate speech on social media.

“This system should act preventatively to avert human rights crises, not just reactively to respond to offline harm that has already occurred,” it writes.

Other recommendations include that Facebook should correct the record on false news and disinformation by notifying and providing corrections from fact-checkers to each and every user who has seen content deemed to have been false or purposefully misleading, including if the disinformation came from a politician; that it should be transparent about all page and post takedowns by publishing its rationale on the Facebook Newsroom so the issue of hate speech is given proportionate prominence and publicity to the size of the problem on Facebook; and it should agree to an independent audit of hate speech and human rights on its platform in India.

“Facebook has signed up to comply with the UN Guiding Principles on Business and Human Rights,” Avaaz notes. “Which require it to conduct human rights due diligence such as identifying its impact on vulnerable groups like women, children, linguistic, ethnic and religious minorities and others, particularly when deploying AI tools to identify hate speech, and take steps to subsequently avoid or mitigate such harm.”

We reached out to Facebook with a series of questions about Avaaz’s report and also how it has progressed its approach to policing inter-ethnic hate speech since the Myanmar crisis — including asking for details of the number of people it employs to monitor content in the region.

Facebook did not provide responses to our specific questions. It just said it does have content reviewers who are Assamese and who review content in the language, as well as reviewers who have knowledge of the majority of official languages in India, including Assamese, Hindi, Tamil, Telugu, Kannada, Punjabi, Urdu, Bengali and Marathi.

In 2017 India overtook the US as the country with the largest “potential audience” for Facebook ads, with 241M active users, per figures it reports to advertisers.

Facebook also sent us this statement, attributed to a spokesperson:

We want Facebook to be a safe place for all people to connect and express themselves, and we seek to protect the rights of minorities and marginalized communities around the world, including in India. We have clear rules against hate speech, which we define as attacks against people on the basis of things like caste, nationality, ethnicity and religion, and which reflect input we received from experts in India. We take this extremely seriously and remove content that violates these policies as soon as we become aware of it. To do this we have invested in dedicated content reviewers, who have local language expertise and an understanding of the India’s longstanding historical and social tensions. We’ve also made significant progress in proactively detecting hate speech on our services, which helps us get to potentially harmful content faster.

But these tools aren’t perfect yet, and reports from our community are still extremely important. That’s why we’re so grateful to Avaaz for sharing their findings with us. We have carefully reviewed the content they’ve flagged, and removed everything that violated our policies. We will continue to work to prevent the spread of hate speech on our services, both in India and around the world.

Facebook did not tell us exactly how many people it employs to police content for an Indian state with a population of more than 30 million people.

Globally the company maintains it has around 35,000 people working on trust and safety, less than half of whom (~15,000) are dedicated content reviewers. But with such a tiny content reviewer workforce for a global platform with 2.2BN+ users posting night and day all around the world there’s no plausible way for it to stay on top of its hate speech problem.

Certainly not in every market it operates in. Which is why Facebook leans so heavily on AI — shrinking the cost to its business but piling content-related risk onto everyone else.

Facebook claims its automated tools for detecting hate speech have got better, saying that in Q1 this year it increased the proactive detection rate for hate speech to 65.4% — up from 58.8% in Q4 2017 and 38% in Q2 2017.

However it also says it only removed 4 million pieces of hate speech globally in Q1. Which sounds incredibly tiny vs the size of Facebook’s platform and the volume of content that will be generated daily by its millions and millions of active users.

Without tools for independent researchers to query the substance and spread of content on Facebook’s platform it’s simply not possible to know how many pieces of hate speech are going undetected. But — to be clear — this unregulated company still gets to mark its own homework.

In just one example of how Facebook is able to shrink perception of the volume of problematic content it’s fencing, of the 213 pieces of content related to Assam and the NRC that Avaaz judged to be hate speech and reported to Facebook it removed less than half (96).

Yet Facebook also told us it takes down all content that violates its community standards, suggesting it is applying a far more dilute definition of hate speech than Avaaz. Unsurprising for a US company whose nascent crisis PR content review board’s charter includes the phrase “free expression is paramount”. But for a company that also claims to want to prevent conflict and peace-build it’s rather conflicted, to say the least.

As things stand, Facebook’s self-reported hate speech performance metrics are meaningless. It’s impossible for anyone outside the company to quantify or benchmark platform data. Because no one except Facebook has the full picture, and it’s not opening its platform for ethical audit. Even as the impacts of harmful, hateful stuff spread on Facebook continue to bleed out and damage lives around the world.